Game development AI in 2026 is no longer a single tool you bolt onto an engine; it is a stack — a pipeline of seven AI primitives that together turn one paragraph of design intent into a playable browser game with original art, original audio, and original code. Sorceress ships every layer of that stack inside one suite, which is why this guide reads like a tour of a stack rather than a list of disconnected products. The seven pillars below cover everything an indie team actually needs to ship: the coding agent, the visual generation layer, the 2D sprite pipeline, the 3D character pipeline, the voxel pipeline, the audio pipeline, and the publish-and-iterate layer. Each pillar is mapped to the specific Sorceress tool that owns it, with the verified model lineup, capability scope, and credit costs. Verified May 12, 2026.

The game development AI stack at a glance

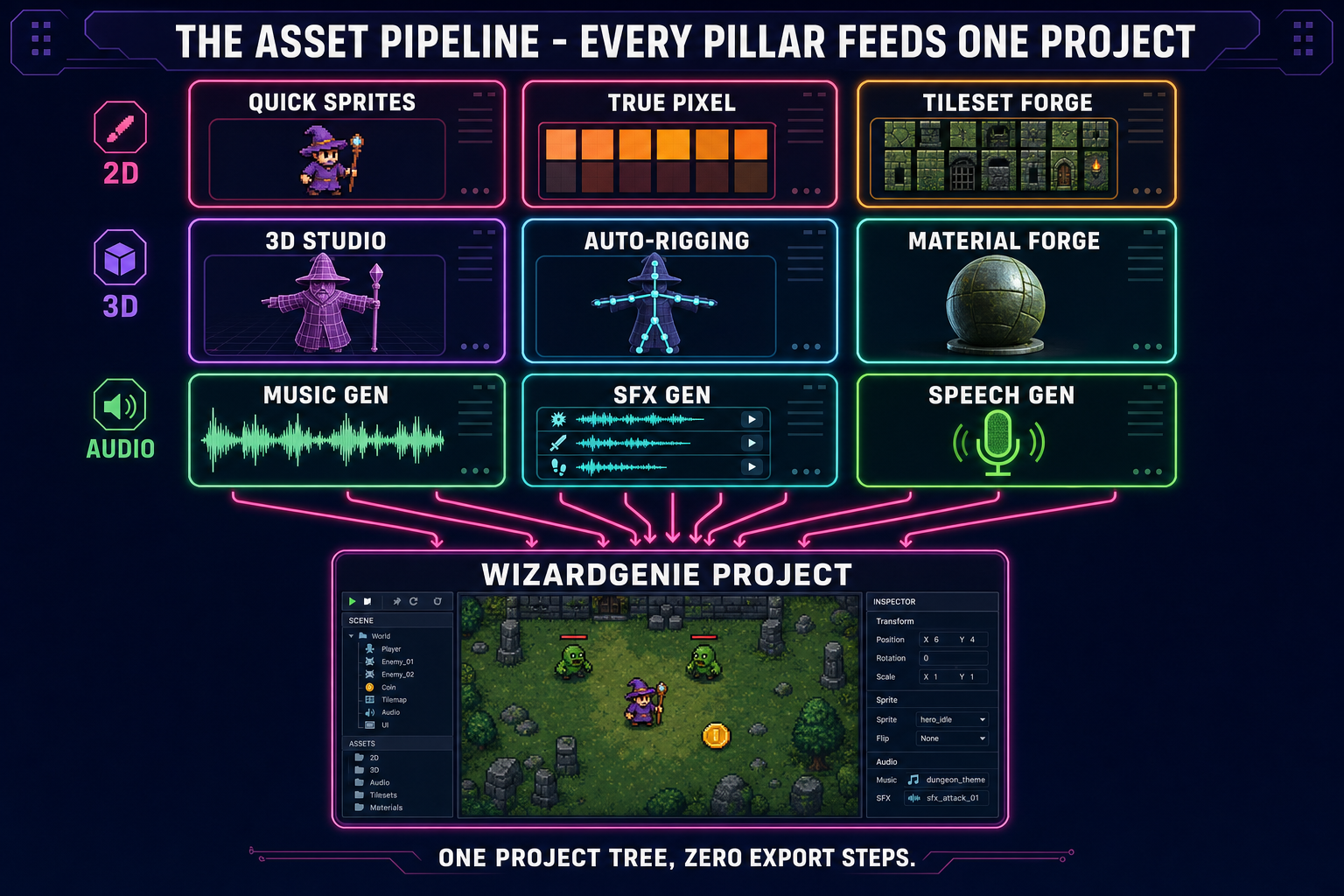

Before walking each pillar in detail, the overview. The Sorceress game development AI stack is organised around the actual order an indie team ships a game in — coding first because the project shape decides everything that follows, then visual generation because the art identity decides which sprite and 3D pipelines apply, then the asset layers in genre order, then audio, then the publish-and-play loop. The seven pillars and the one-line case for each:

- The coding layer. An AI agent that writes, runs, and iterates on the game in a browser tab. WizardGenie + Sorceress Code drive eight frontier coding models and emit playable Phaser 4.1 / Three.js r184 projects from a single prompt.

- The visual generation layer. Flagship image and video models behind two companion panels. AI Image Gen covers stills; AI Video Gen covers motion. Together they feed every downstream art pipeline.

- The 2D sprite and tile layer. Quick Sprites, Auto-Sprite v2, True Pixel, Tileset Forge, and Seamless Tile Gen turn AI art into game-ready 2D sprites and tilemaps.

- The 3D character and world layer. 3D Studio + Auto-Rigging + Procedural Walk + Material Forge ship a rigged, animated 3D character from a single 2D reference, plus full PBR materials.

- The voxel layer. Voxel Studio turns prompts, images, or 3D models into rigged voxel creations — the only stack we ship for blocky-aesthetic games.

- The audio layer. Sound Studio bundles Music Gen, SFX Gen, Speech Gen, and SFX Editor. Every audio asset a game needs — music, SFX packs, NPC voices, mastered clips — without leaving the browser.

- The publish and iterate layer. Push the finished game to the Sorceress Arcade or GitHub Pages, preview it on every screen size with Layout Preview, and put it in front of other vibe coders on the Play Arcade. This layer closes the loop.

Read the rest of this post as a top-down tour of the stack — what each pillar does, the specific tool that owns it inside Sorceress, the credit cost where it matters, and which other pillar each one feeds into. Cross-links use ?ref=blog so the dashboard can attribute the pipeline-to-tool funnel.
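As a rough illustration of the ?ref=blog convention, the sketch below appends the parameter to a site-relative link. Only the parameter name and value come from this post; the helper name `withBlogRef` and the placeholder origin are assumptions made for the example, not Sorceress code.

```typescript
// Hypothetical helper: tag a site-relative link with ?ref=blog.
// The origin below is a placeholder used only so the URL parser accepts relative paths.
const PLACEHOLDER_ORIGIN = "https://example.invalid";

function withBlogRef(path: string, ref: string = "blog"): string {
  const url = new URL(path, PLACEHOLDER_ORIGIN);
  // set() overwrites an existing ref value, so a link never carries two of them.
  url.searchParams.set("ref", ref);
  // Return a site-relative link again: path, query string, and fragment.
  return url.pathname + url.search + url.hash;
}

console.log(withBlogRef("/wizard-genie/app"));
```

Existing query parameters and fragments are preserved, so a link such as `/generate?model=x#top` keeps both and gains `ref=blog` alongside them.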

What “game development AI” actually means in 2026

Two narrower meanings hide behind the umbrella term, and they are often conflated. The first is the coding side: an agent that writes the game’s logic, scenes, physics, input, and rendering glue from a natural-language prompt. The second is the asset side: AI models that generate the sprites, the 3D meshes, the music, the SFX, and the voiceover the agent then loads at runtime. A real game development AI stack covers both, because shipping the code without the assets gives you a coloured-square prototype and shipping the assets without the code gives you a folder of files no one can play.

The 2026 version of the stack is also browser-native and model-agnostic. Browser-native because installing an engine is a friction step indie teams skip; model-agnostic because the per-token cost of frontier reasoners makes single-model pipelines uneconomical past the prototype phase. Both points show up in every pillar below. The coding layer drives eight frontier models behind a single picker; the 2D layer accepts art from any image model with a reference-image input; the 3D layer routes between seven image-to-3D providers depending on the source. The principle: any one model is a temporary best-in-class — the stack outlives the model lineup.
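One way to picture a model-agnostic layer is a thin adapter interface with a picker in front of it, so that swapping a model changes one identifier and nothing else in the pipeline. The sketch below is a minimal illustration under that assumption; `ModelAdapter`, `createPicker`, and the stub model ids are hypothetical names, and the post does not describe how Sorceress implements its picker.

```typescript
// Hypothetical shapes for illustration only; not Sorceress's actual interfaces.
type ModelId = string;

interface ModelAdapter {
  id: ModelId;
  // A real adapter would call a provider API; this sketch stays synchronous and offline.
  generate(prompt: string): string;
}

function createPicker(adapters: ModelAdapter[]) {
  const byId: Record<ModelId, ModelAdapter> = {};
  for (const adapter of adapters) byId[adapter.id] = adapter;
  return {
    // Ids in registration order, e.g. to populate a dropdown.
    ids: (): ModelId[] => Object.keys(byId),
    pick(id: ModelId): ModelAdapter {
      const adapter = byId[id];
      if (!adapter) throw new Error(`No adapter registered for "${id}"`);
      return adapter;
    },
  };
}

// Stub adapters that echo the prompt, standing in for real providers.
const stub = (id: ModelId): ModelAdapter => ({
  id,
  generate: (prompt) => `[${id}] ${prompt}`,
});

const picker = createPicker([stub("model-a"), stub("model-b")]);
console.log(picker.pick("model-b").generate("make a platformer"));
```

The calling code depends only on the `ModelAdapter` interface, which is the property that lets a stack keep working when the lineup behind the picker changes.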

The Sorceress catalog under /tools-guide lists every tool by group. The seven pillars below collapse those groups into the order an actual project uses them. Verified against src/app/_home-v2/_data/tools.ts and the per-tool page components on May 12, 2026.

1. The coding layer — WizardGenie and Sorceress Code

The agent that writes the game. WizardGenie at /wizard-genie/app is the AI-native game engine at the heart of Sorceress: describe the game, and the agent writes the file tree, runs the dev server, observes the output, and iterates. The model picker drives eight frontier coding models: Claude Opus 4.7, Claude Sonnet 4.6, GPT-5.5, Gemini 3.1 Pro, DeepSeek V4 Pro, Kimi K2.5, Grok 4.2, and MiniMax M2.7. Bring-your-own-key is the unlimited path on every model; the trial chip in the header tracks the free DeepSeek V4 Flash pool every account starts with.

WizardGenie ships in two flavours. The web build runs at /wizard-genie/app with no install, and the Windows desktop build ships native filesystem access plus longer-running agent sessions for supporters. Both builds emit projects on Phaser 4.1 for 2D and Three.js r184 for 3D. The dual-agent Planner+Executor mode pairs a frontier reasoner (Opus 4.7, GPT-5.5, Gemini 3.1 Pro, Grok 4.2) with a budget executor (DeepSeek V4 Pro, Kimi K2.5, MiniMax M2.7) and brings the per-session cost to roughly one-fifth of a single-frontier-model session on the same project.
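The arithmetic behind a planner-plus-executor saving can be sketched as follows. Every input here is invented for illustration (the token count, the planner's share of tokens, and both rates in abstract price units), so the result shows only the shape of the calculation and not measured Sorceress costs; real ratios depend on actual prices and on how the work splits between the two agents.

```typescript
// Illustrative cost model for a planner+executor split.
// All numbers are made up for the example; none is a real price or measurement.

// Cost of `tokens` tokens at `ratePerMillion` price units per million tokens.
function cost(tokens: number, ratePerMillion: number): number {
  return (tokens / 1e6) * ratePerMillion;
}

// The planner handles `plannerShare` of the tokens; the executor handles the rest.
function dualAgentCost(
  totalTokens: number,
  plannerShare: number,
  plannerRate: number,
  executorRate: number,
): number {
  if (plannerShare < 0 || plannerShare > 1) {
    throw new RangeError("plannerShare must be between 0 and 1");
  }
  const plannerTokens = totalTokens * plannerShare;
  const executorTokens = totalTokens - plannerTokens;
  return cost(plannerTokens, plannerRate) + cost(executorTokens, executorRate);
}

const totalTokens = 2e6;   // hypothetical session size
const frontierRate = 15;   // hypothetical frontier rate (price units per million tokens)
const budgetRate = 1;      // hypothetical budget rate
const plannerShare = 0.15; // hypothetical fraction of tokens spent on planning

const single = cost(totalTokens, frontierRate);
const dual = dualAgentCost(totalTokens, plannerShare, frontierRate, budgetRate);
const ratio = dual / single;

console.log(ratio.toFixed(2)); // about 0.21 with these made-up inputs
```

The saving is driven by two levers: how small the planner's share of tokens is, and how much cheaper the executor's rate is than the planner's.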

Sorceress Code at /code is the lighter-weight coding agent for non-engine code: tooling scripts, build pipelines, asset converters. The two products share a model picker but split the workload: WizardGenie owns the gameplay loop, and Sorceress Code owns the surrounding plumbing. The full per-model deep dive is in the best AI model for coding write-up, and the four pipeline shapes (one-shot, dual-agent, asset-first, genre-template) are walked through in the prompt-to-game pipelines guide.

2. The visual generation layer — AI Image Gen and AI Video Gen

The art-source layer. AI Image Gen at /generate drives every flagship image model from one panel: Nano Banana Pro and Nano Banana 2 (Google), GPT Image 2 (OpenAI), Seedream 5 Lite (uncensored), Flux 2 Pro (Black Forest Labs), Z-Image Turbo, and Grok Imagine. Reference-image input on every model means character consistency across an entire game is a workflow, not a happy accident — the stay-on-model character guide walks the recipe.

The companion video panel at /video covers motion. There are eight video models in the picker: Grok Imagine Video, Wan 2.7 (uncensored), Seedance 2.0, Seedance 2.0 Fast, Wan 2.2 Fast, Seedance 1.5 Pro, Kling 3.0, and Kling 2.5 Turbo Pro. Image-to-video and text-to-video are both supported, with end-frame control on the latest Seedance and Kling models. The video output feeds two downstream pillars: pillar 3 directly (Auto-Sprite v2 turns video into sprite sheets) and pillar 7 indirectly (cinematic trailers for the published game). The cinematic-cutscene workflow is in the cinematic AI animation guide.
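To make the video-to-sprite-sheet step concrete, the sketch below computes where each extracted frame would sit on a grid-packed sheet. This is the general grid-layout idea only; the function name `layoutSpriteSheet` and its parameters are hypothetical, and the post does not document how Auto-Sprite v2 actually packs frames.

```typescript
// Generic grid packing for equally sized frames; not Auto-Sprite v2's documented behaviour.
interface FrameRect {
  index: number;
  x: number;
  y: number;
  width: number;
  height: number;
}

interface SheetLayout {
  sheetWidth: number;
  sheetHeight: number;
  frames: FrameRect[];
}

function layoutSpriteSheet(
  frameCount: number,
  frameWidth: number,
  frameHeight: number,
  columns: number,
): SheetLayout {
  if (frameCount < 1 || columns < 1) {
    throw new RangeError("frameCount and columns must be at least 1");
  }
  // Never allocate more columns than there are frames.
  const usedColumns = Math.min(columns, frameCount);
  const rows = Math.ceil(frameCount / usedColumns);
  const frames: FrameRect[] = [];
  for (let i = 0; i < frameCount; i++) {
    frames.push({
      index: i,
      x: (i % usedColumns) * frameWidth,            // column position, left to right
      y: Math.floor(i / usedColumns) * frameHeight, // row position, top to bottom
      width: frameWidth,
      height: frameHeight,
    });
  }
  return {
    sheetWidth: usedColumns * frameWidth,
    sheetHeight: rows * frameHeight,
    frames,
  };
}

// Ten 64x64 frames packed four per row.
const layout = layoutSpriteSheet(10, 64, 64, 4);
console.log(layout.sheetWidth, layout.sheetHeight);
```

A fixed frame size and row-major order match what 2D engines commonly expect when slicing a sheet by frame width and height, which is why the layout keeps every rectangle the same size.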

Two utility tools sit inside the same group and matter for the asset pipeline. BG Remover turns any image into a transparent PNG — mandatory before any sprite-sheet step. Image Expander outpaints AI art beyond its original borders, which is how parallax backgrounds and key art get made from a single generation. The background remover guide walks the sprite-prep recipe.