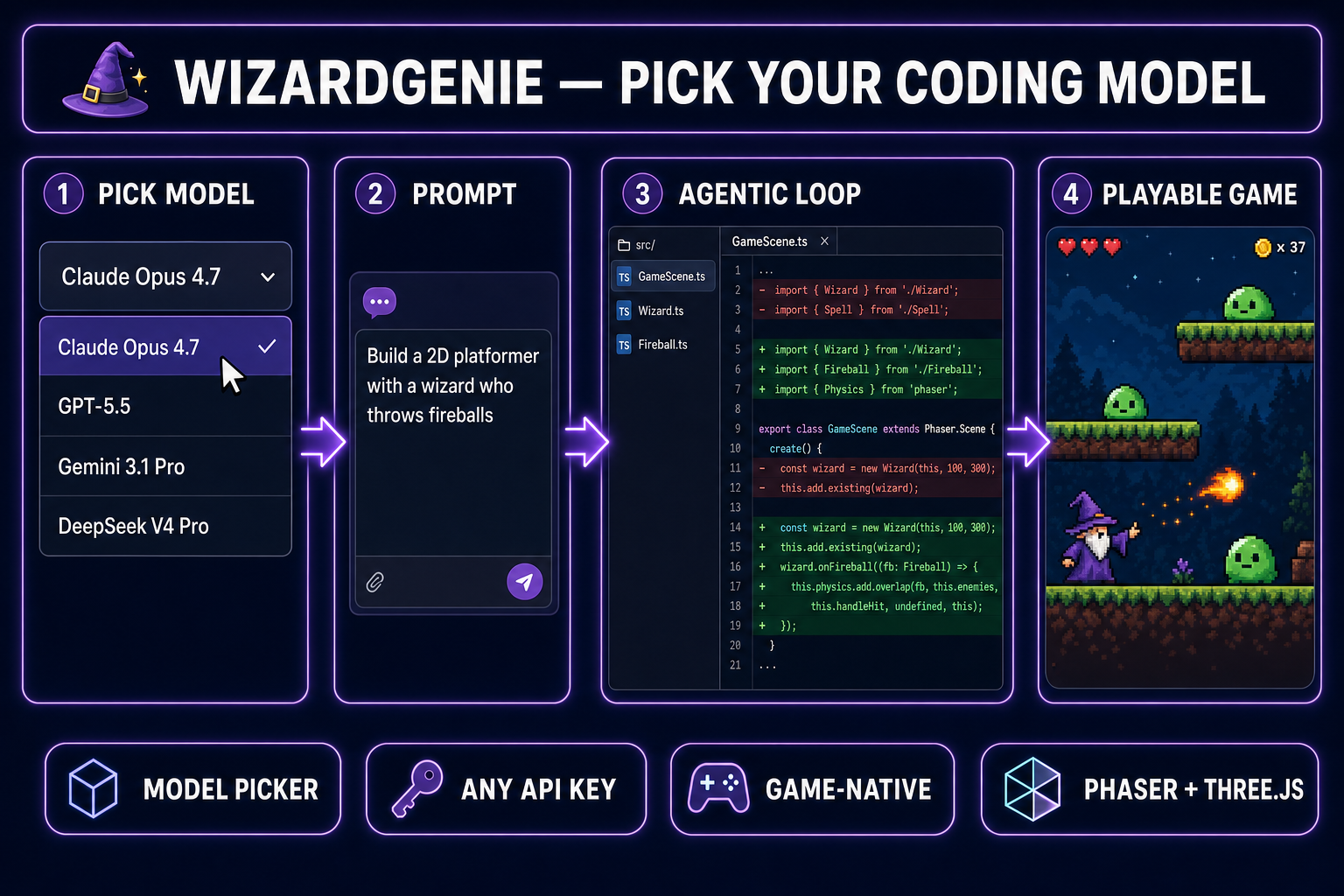

The best AI model for coding in 2026 isn’t a single answer — it’s eight serious models on the table, each better at a different job. Some are brilliant at agentic refactors but slow and expensive. Others are fast and cheap but make brittle calls when the codebase gets weird. WizardGenie bundles all eight behind one model picker, which means you can stop arguing about which model is “best” in the abstract and start asking the only question that matters: best at what?

This is the answer for game dev specifically — built and rebuilt over hundreds of agent sessions targeting Phaser, Three.js, and the kind of tight gameplay loops you ship in a jam. Every claim below was tested by running the same task across every model in WizardGenie. Every model name, version, and capability matches what is actually in the model picker today.

The verdict

- Best overall for game dev: Claude Opus 4.7 — the model that actually understands “this is a 2D platformer and I want forgiving wall-jumps.”

- Best for one-shot vibe coding: GPT-5.5 — commits to a direction without three rounds of clarifying questions.

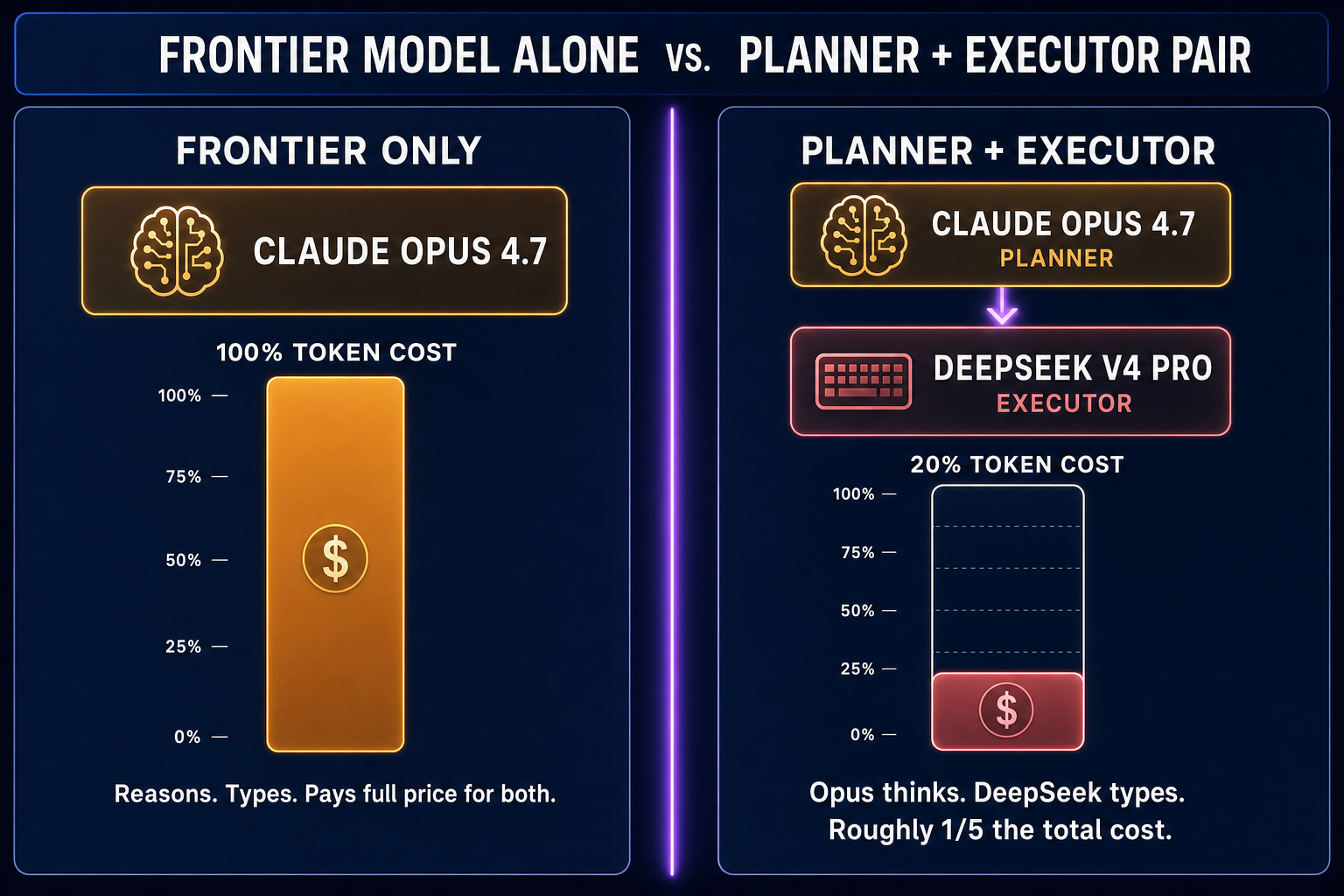

- Best executor in a Planner+Executor pair: DeepSeek V4 Pro — roughly one-fifth the per-token cost of a frontier model and fast enough to keep the loop tight.

- Best for huge codebases: Grok 4.2 — 2M context window means the agent can hold every file at once.

- Cheapest realistic path: the free DeepSeek V4 Flash trial built into WizardGenie, then bring your own DeepSeek key for unlimited use.

How we tested (game dev only, no SaaS-bench cosplay)

Public coding leaderboards mostly score models on standard software-engineering benchmarks. That isn’t what game dev needs. A model can ace a LeetCode-style problem and still write a Phaser scene with the camera glued to the wrong sprite. So the test was deliberately game-shaped:

- One-shot vibe-code task: “Build a 2D platformer with a wizard who throws fireballs at slimes. Three platforms, a death pit, a score counter. Phaser 3, single HTML file, runnable now.” Each model got the exact same prompt; we timed how long until something playable appeared and how many bugs were left.

- Iterative refactor task: Give every model the same 600-line Three.js mini-game and ask it to add a particle system on enemy death. Counted: did it understand the existing scene graph, did it break anything, did it pass build cleanly.

- Agentic loop endurance: 30-turn sessions where the user makes a stream of small “make it feel better” requests — tighter jumps, snappier shots, faster respawn. Counted: how many rounds before the model started losing track of state.

Same prompts. Same starting conditions. Eight runs. The picker order below is the result — it isn’t an opinion, it’s a workload-specific ranking.

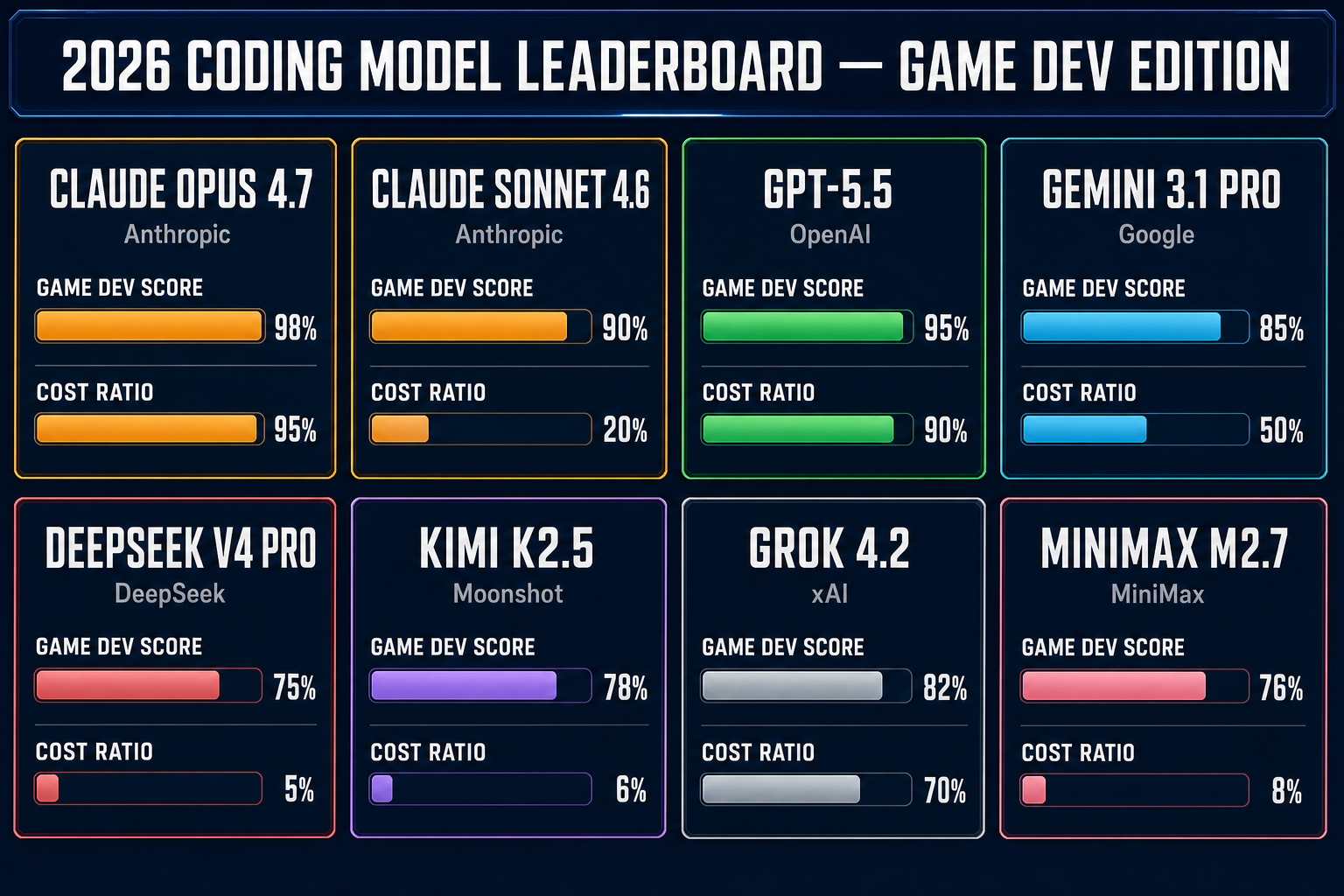

The best AI model for coding, ranked (2026 leaderboard)

Every model in this table is currently selectable in the WizardGenie picker. Tiers are calibrated to the model’s behavior on the three game-dev tasks above. The “ratio” column is the typical per-token API cost relative to the frontier (Opus 4.7 ≈ 1.0). Treat ratios as orders of magnitude — exact pricing shifts month to month and you should always check current rates from your provider before a long session. Reading this as “what is the best AI model for coding right now” — the answer is in the row labels, but the right answer for your session depends on which task you’re about to ask it to do.

| Model | Tier | Best at | Cost ratio | Context |

|---|---|---|---|---|

| Claude Opus 4.7 | Frontier reasoner | Multi-step game-design thinking | ~1.0× | 200K |

| Claude Sonnet 4.6 | Smart + fast | Iterative refactors mid-session | ~0.2× | 200K |

| GPT-5.5 | Frontier generalist | One-shot vibe code, no second-guessing | ~0.9× | 400K |

| Gemini 3.1 Pro | Long-context champ | Holding entire repos in head | ~0.5× | 1M |

| DeepSeek V4 Pro | Budget workhorse | Cheap fast typing in a Planner+Executor pair | ~0.05× | 128K |

| Kimi K2.5 | Coding specialist | Mid-budget refactors with 256K coding context | ~0.06× | 256K |

| Grok 4.2 | Massive context | 2M-token codebases without summarization | ~0.7× | 2M |

| MiniMax M2.7 | Agent-tuned | Tool use and multi-step planning at low cost | ~0.08× | 256K |

Best for one-shot vibe coding: GPT-5.5

“Vibe coding” is a real category — you describe a thing, the model commits to a direction, you steer with feel rather than spec. The model has to make a hundred small unspecified design decisions on its own without nagging you for clarifications. GPT-5.5 wins this clearly.

On the platformer-with-fireballs prompt, GPT-5.5 produced a playable build in one shot — Phaser scene, sprite placeholders, gravity, collision, a slime that dies on fireball impact, a working score counter. Total wall time around forty seconds. It picked Phaser 3, picked an arcade-physics body for the wizard, picked a 320×180 internal resolution scaled up — all without asking, all sensible.

Claude Opus 4.7 also produced a playable build first try, but tended to ask one clarifying question first (“would you like the slimes to respawn or stay dead?”). That’s actually the right instinct on a serious project; it’s the wrong instinct when you just want to feel out a vibe in five minutes. Use Opus when you have time to steer; use GPT-5.5 when you want momentum.