If you’ve been wondering how to make a video game with AI but have never written a line of code, the answer used to be “go learn Unity for six months.” That isn’t the answer anymore. AI coding agents can now design, write, run, and debug a real game while you describe what you want in plain English. This guide walks through exactly how to do it, from a one-sentence idea to a game you can actually play, in well under an hour.

The whole workflow runs in a single editor. No SDK juggling, no command-line setup, no learning a proprietary scripting language first. The only thing you need is a free Sorceress account and an idea.

How to make a video game with AI: what you’ll need first

One free account on WizardGenie, the AI-native game engine inside Sorceress. That’s the whole shopping list. No Unity install. No Godot install. No GitHub account. No graphics tablet. No paid AI subscription on day one — WizardGenie ships with a fallback trial key so you can prove the workflow before you pay anyone for tokens.

If you want to hand-pick which AI model writes your game’s code, WizardGenie offers these flagship coding models in a single drop-down: Claude Opus 4.7 and Claude Sonnet 4.6, GPT-5.5, Gemini 3.1 Pro, Grok 4.2, DeepSeek V4 Pro, Kimi K2.5, and MiniMax M2.7. Bring your own API key and pay providers directly, with no markup or middleman.

Step 1 — Open WizardGenie (web or desktop)

WizardGenie is available in two forms, so pick whichever works for you:

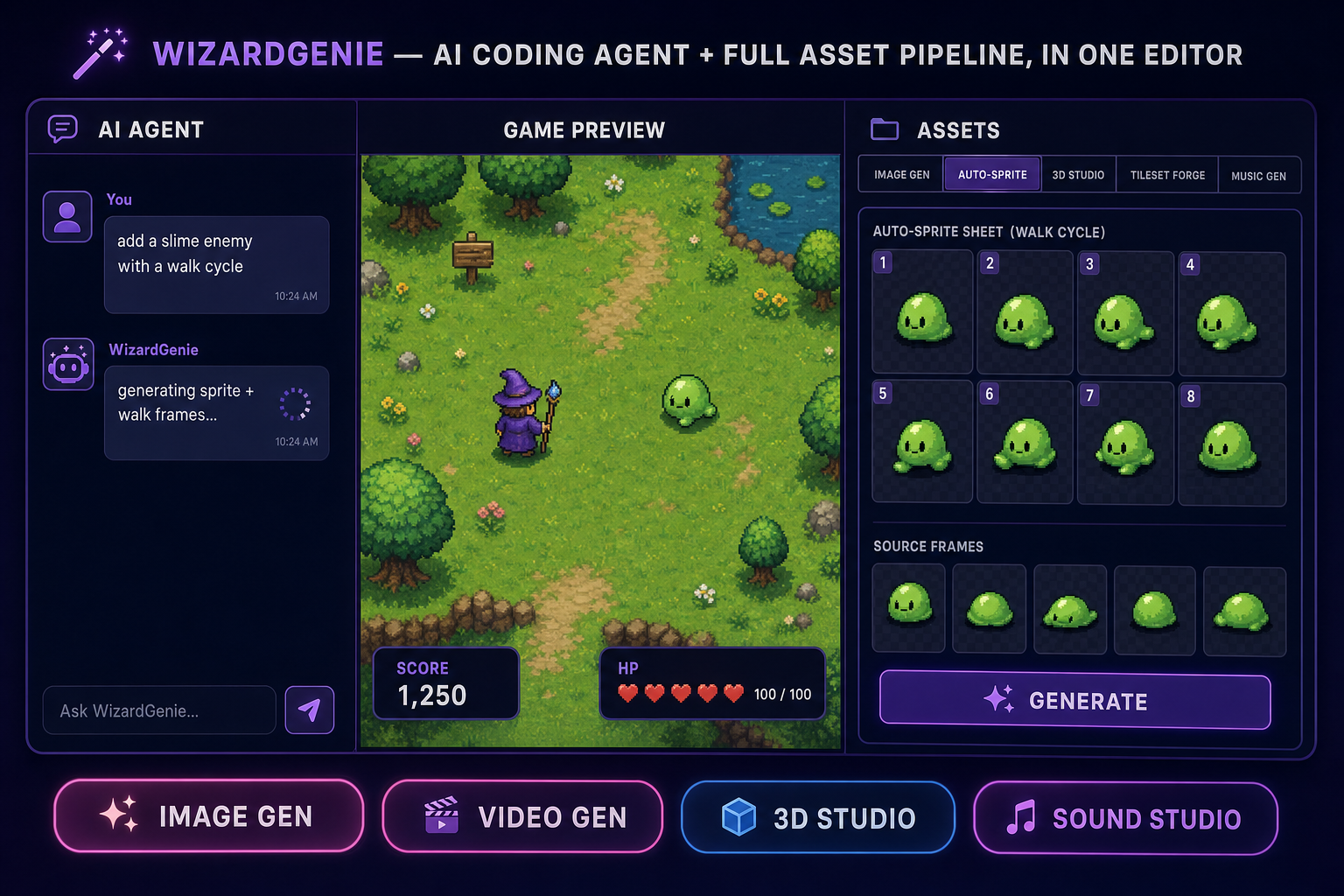

- Web app. Go to Sorceress.games/wizard-genie/app. A real game editor loads inside the browser tab — chat panel on the left, live game preview in the middle, tool palette on the right with image generation, music generation, sprite tools, and 3D assets all built in. Zero install.

- Desktop app. A Windows installer with auto-updates, native filesystem access, and longer-running agent sessions. Built for serious project work — keep your files on your machine, run the agent for hours at a time, and stay productive offline once the project is loaded. Get it on the WizardGenie page.

Both versions run the same agent on the same project format, so you can start in the browser, install the desktop app later, and pick the project up where you left off.

You don’t have to configure anything on first open. WizardGenie bootstraps a clean Phaser project (for 2D) or Three.js project (for 3D) so the agent has somewhere to write code. From here on the AI does the heavy lifting.

Step 2 — Describe the game you want, in plain English

Type your game idea into the chat box. The trick is to write the way you’d describe a game to a friend, not the way you’d write a spec doc. Examples that work well:

- “A side-scrolling platformer with a wizard who shoots fireballs at goblins.”

- “A top-down RPG where you walk around a forest and fight slimes.”

- “A puzzle game where you push blocks onto switches to open doors.”

- “A space shooter — the player flies a ship at the bottom of the screen and asteroids fall from the top.”

Keep your first prompt short. The agent’s first job is to lock in a genre and a player loop, not to ship the whole game in one shot. You’ll layer details in over the next few prompts. If you ask for too much at once, you’ll spend more time undoing things than building forward.

Step 3 — Let the AI agent write and run the code

WizardGenie reads your prompt, picks the right framework (Phaser for 2D, Three.js for 3D), and starts writing actual TypeScript. Files appear in the file tree as the agent works. Within 30–60 seconds the agent hot-reloads the preview and you can play the first version of your game in the middle panel.
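
To give a feel for what a "player loop" looks like in code, here is a minimal sketch of the space shooter idea from Step 2. It is deliberately framework-free and is not WizardGenie's actual output, which would be built on Phaser or Three.js. Every name and number in it (`GameState`, `step`, the speeds, the hit radius) is a made-up placeholder for illustration.

```typescript
// Illustrative only: a framework-free player loop for a simple space shooter.
// All names and constants here are hypothetical, not generated by WizardGenie.

interface Vec {
  x: number;
  y: number;
}

interface GameState {
  player: Vec;
  asteroids: Vec[];
  gameOver: boolean;
}

const WIDTH = 400; // playfield width in pixels
const HEIGHT = 600; // playfield height in pixels
const PLAYER_SPEED = 200; // pixels per second
const ASTEROID_SPEED = 120; // pixels per second
const HIT_RADIUS = 20; // collision distance in pixels

// Advance the game by dt seconds. moveDir is -1 (left), 0 (still), or 1 (right).
function step(state: GameState, moveDir: -1 | 0 | 1, dt: number): GameState {
  if (state.gameOver) return state;

  // Move the ship sideways and keep it inside the playfield.
  const x = Math.min(WIDTH, Math.max(0, state.player.x + moveDir * PLAYER_SPEED * dt));
  const player: Vec = { x, y: state.player.y };

  // Asteroids fall from the top; drop the ones that leave the bottom edge.
  const asteroids = state.asteroids
    .map((a) => ({ x: a.x, y: a.y + ASTEROID_SPEED * dt }))
    .filter((a) => a.y <= HEIGHT);

  // The game ends when any asteroid gets within HIT_RADIUS of the ship.
  const gameOver = asteroids.some(
    (a) => Math.hypot(a.x - player.x, a.y - player.y) < HIT_RADIUS
  );

  return { player, asteroids, gameOver };
}
```

A real engine calls something like `step` once per frame with the elapsed time, then draws the result. That is the part the agent wires up for you, which is why your prompts can stay focused on what happens in the game rather than on the code.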

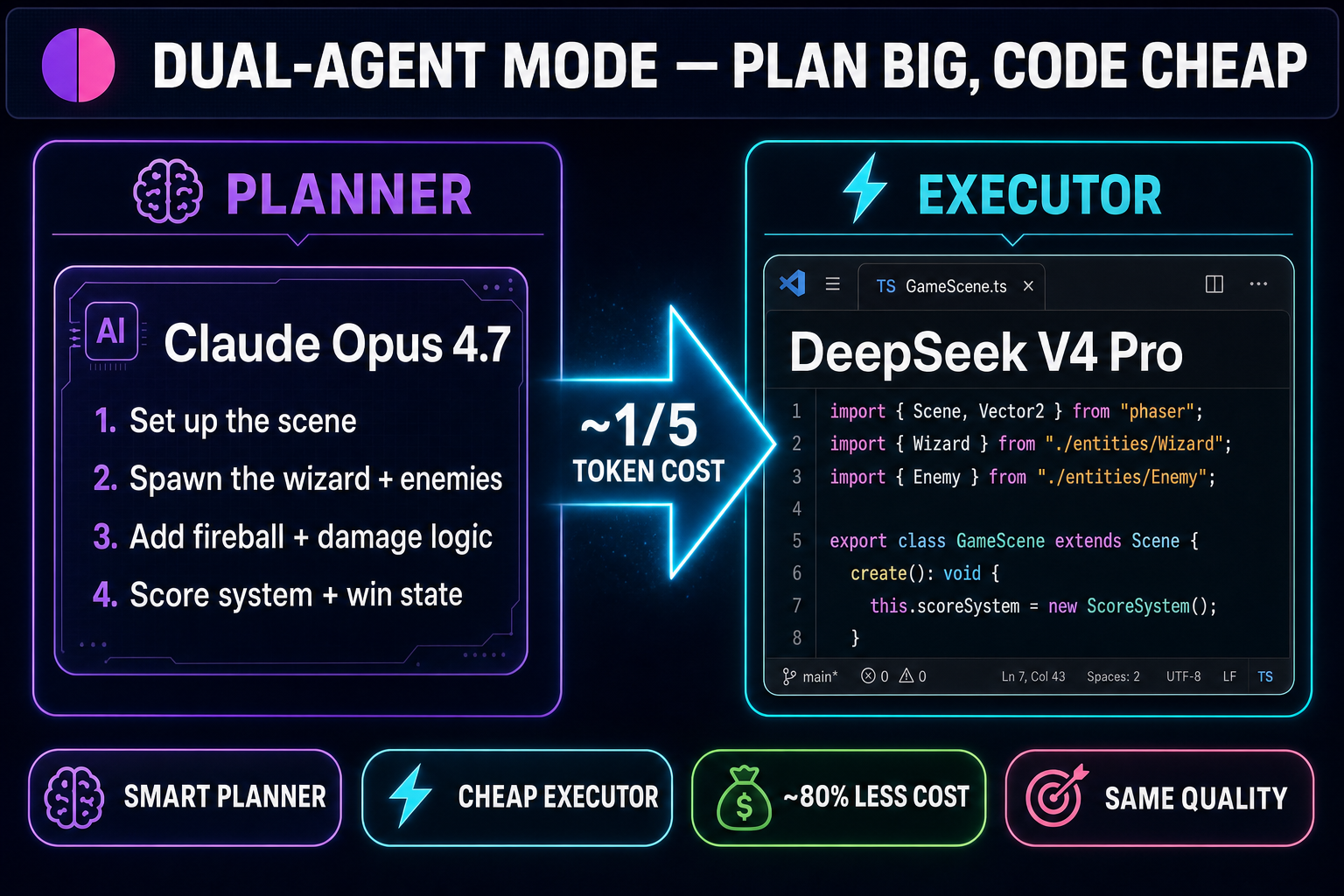

This is where WizardGenie’s dual-agent mode matters. A “Planner” model, typically a top-tier reasoner like Claude Opus 4.7 or GPT-5.5, breaks your idea into a numbered plan. A separate “Executor” model, usually something cheap and fast like DeepSeek V4 Pro or Kimi K2.5, writes the code file by file against that plan. The expensive brain only thinks; the cheap brain only types. That split typically costs about a fifth as much in tokens as using one frontier model for everything, and output quality holds up because the architectural decisions live in the plan, not in the typing.
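
The arithmetic behind that kind of saving can be sketched in a few lines. The prices and token counts below are invented placeholders, not real provider rates or WizardGenie measurements. The point is only to show why moving the bulk of the tokens to a cheaper model shrinks the bill.

```typescript
// Illustrative only: every price and token count here is a hypothetical placeholder.

interface ModelRate {
  name: string;
  usdPerMillionInput: number;
  usdPerMillionOutput: number;
}

interface Usage {
  inputTokens: number;
  outputTokens: number;
}

// Cost of one batch of work on one model, in US dollars.
function costUsd(rate: ModelRate, usage: Usage): number {
  return (
    (usage.inputTokens / 1e6) * rate.usdPerMillionInput +
    (usage.outputTokens / 1e6) * rate.usdPerMillionOutput
  );
}

// Two made-up price tiers: an expensive planner and a cheap executor.
const frontier: ModelRate = { name: "hypothetical-frontier", usdPerMillionInput: 10, usdPerMillionOutput: 40 };
const budget: ModelRate = { name: "hypothetical-budget", usdPerMillionInput: 1, usdPerMillionOutput: 4 };

// Planning is a small share of the tokens; writing the code is most of them.
const planning: Usage = { inputTokens: 20_000, outputTokens: 5_000 };
const coding: Usage = { inputTokens: 200_000, outputTokens: 60_000 };

// One frontier model does everything.
const singleModelCost = costUsd(frontier, planning) + costUsd(frontier, coding);

// Dual-agent split: the frontier model plans, the budget model writes the code.
const splitCost = costUsd(frontier, planning) + costUsd(budget, coding);

const ratio = splitCost / singleModelCost;
console.log(`split costs ${(ratio * 100).toFixed(1)}% of the single-model run`);
```

With these placeholder numbers the split comes to about 17.5% of the single-model cost. Your own ratio will depend on the models you pick and how much of the work is planning versus typing.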

If your trial allotment runs low, switch to your own API key in settings and keep going. From then on you pay the provider directly for tokens, with no markup on top.