Vibe coding is the practice of building software by describing what you want in natural language and steering an AI agent toward it through feel, not specs. The phrase started as a joke and ended up describing the thing most indie game developers actually do now. This post covers what vibe coding is, why it’s eating indie game dev specifically, and how a vibe-coding session for a real playable game actually unfolds.

What vibe coding actually is

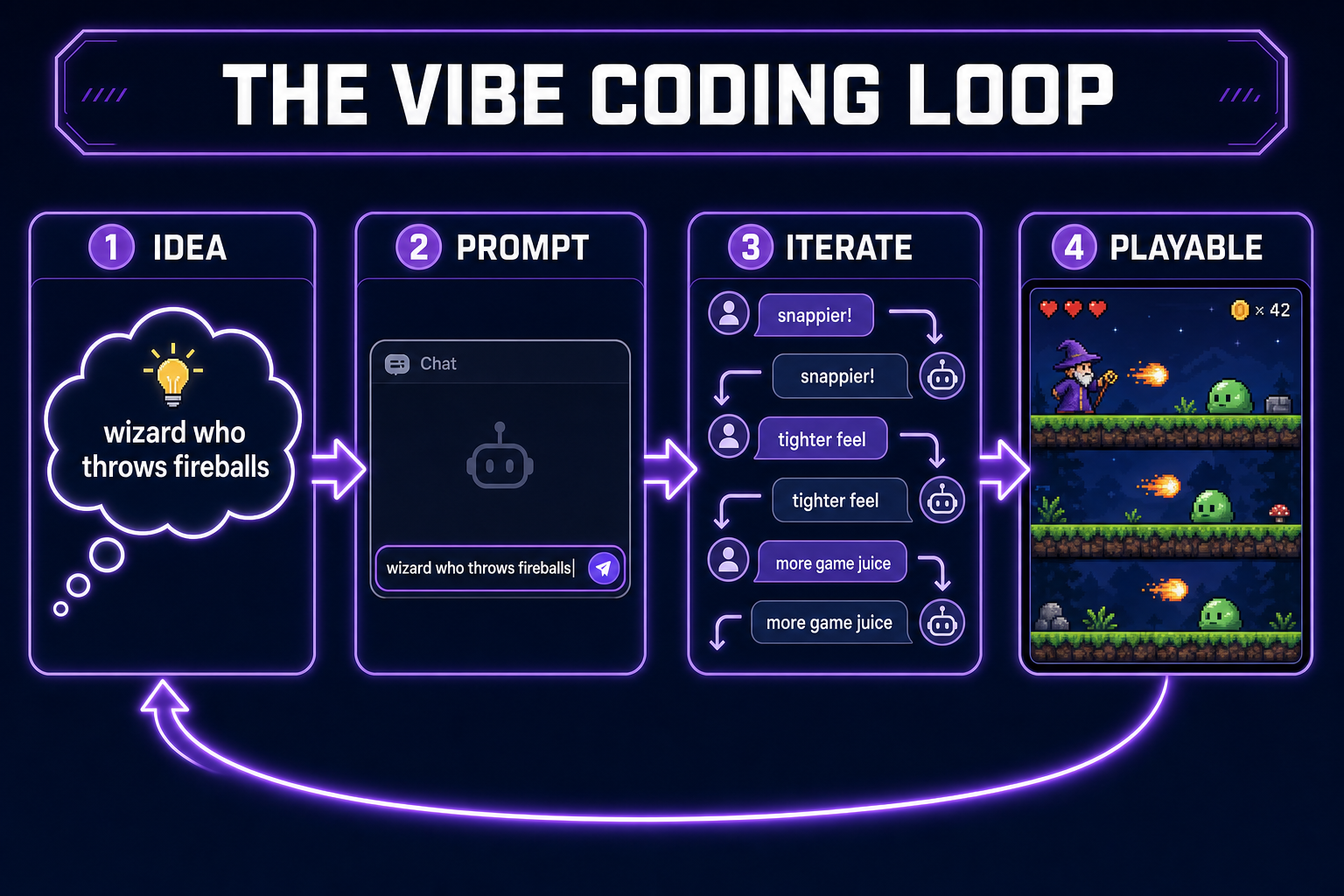

- Vibe coding is iterating on software through natural-language prompts and feel-based steering, with an AI agent doing most of the typing.

- The agent owns the implementation. You own taste, direction, and stop-conditions.

- It’s eating indie game dev first because games are the workload where “does it feel right?” is more useful than a unit test.

- Done well, you ship a real, playable game in an afternoon. Done badly, you produce a pile of half-broken scenes you can’t debug.

- The right tool for vibe coding a game in 2026 is something with a tight prompt → preview → feedback loop. We built WizardGenie for exactly this.

Where vibe coding came from (and why the name stuck)

The phrase “vibe coding” started circulating in early 2025 as developers noticed that long sessions with frontier AI agents felt less like programming and more like jazz. You aren’t writing the code. You aren’t even fully specifying it. You’re nudging the agent — “snappier”, “tighter”, “feels too floaty” — and the agent translates that into actual code changes.

The phrase landed because the description was honest. The traditional software-engineering frame — gather requirements, write specs, design, implement — doesn’t match what you’re doing. You’re doing something closer to taste-based iteration on a black-box implementation. That’s the vibe in vibe coding: a feedback signal that doesn’t need to be formal to be useful.

Critics initially treated it as a slur. The implication was supposed to be that the practitioner doesn’t actually understand the code, and that vibe coding produces software a junior engineer can’t maintain. That criticism is real, but it isn’t the death blow people expected. It’s a constraint on what vibe coding is good for, not proof that it doesn’t work.

Vibe coding vs. spec-then-code (and why the old way doesn’t fit games)

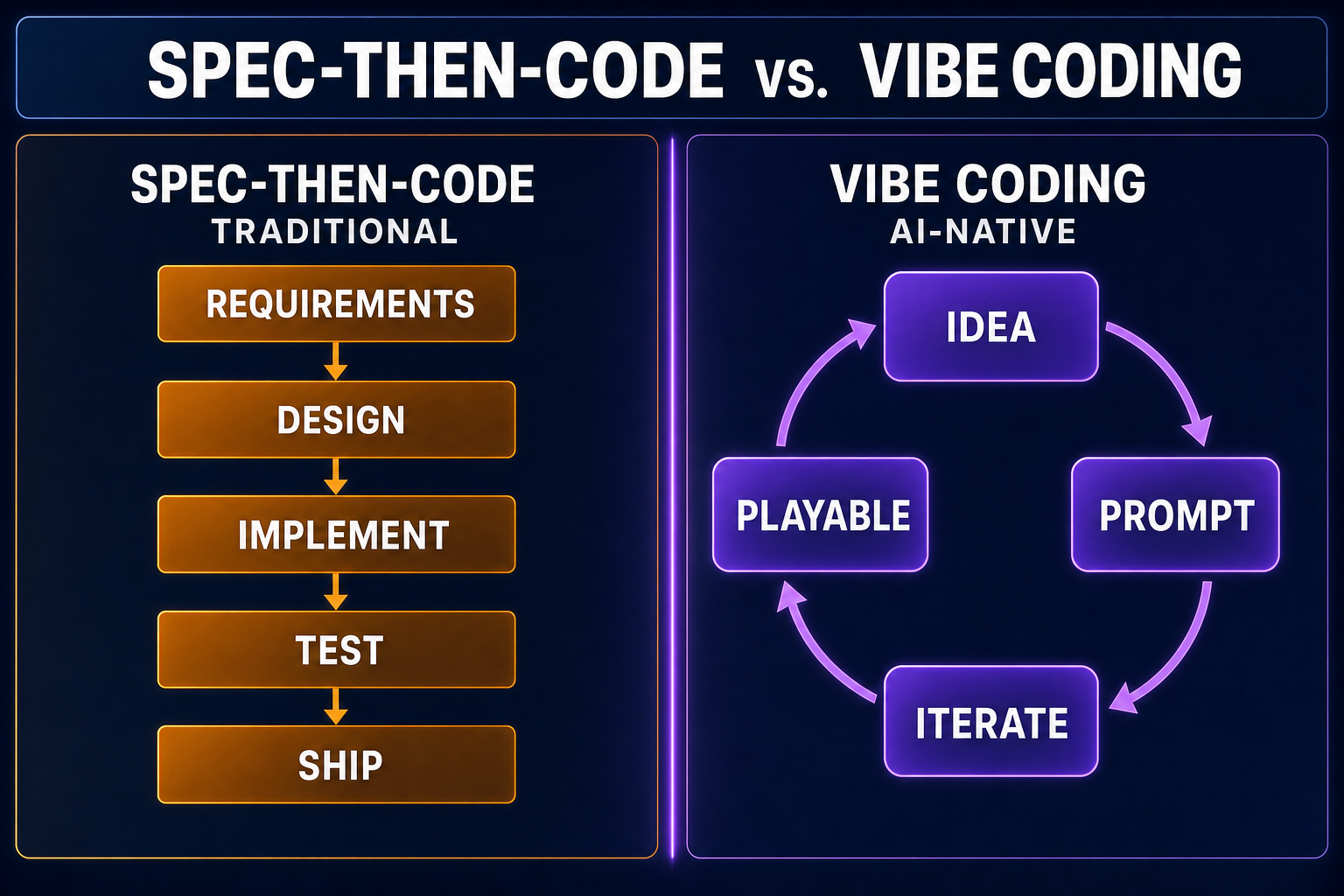

Traditional software engineering is built on the assumption that the requirements can be made explicit before implementation. Specs first, code second. Tests verify the spec. It works because most software has external definitions of “correct” — a tax calculator that returns the wrong number is broken; an inventory system that loses items is broken.

Games have no equivalent. “Correct” is replaced by “fun”, and fun has no specification. You can describe target outcomes (“the player should feel powerful when they pick up the fire-rune”), but you can’t write a unit test that proves you got there. The only verification is that you play it and it feels good. That makes the spec-first frame actively unhelpful: the spec you’d write before the first prototype is wrong because you don’t know yet what feels right.

This is why vibe coding hits indie game dev hardest. The traditional discipline says “design before you code.” Game design has spent fifty years admitting that doesn’t work — every studio runs prototypes early and iterates on game-feel. Vibe coding just compresses the prototype-iterate loop from weeks to minutes by letting an AI agent handle the typing.

The anatomy of a real vibe-coding session

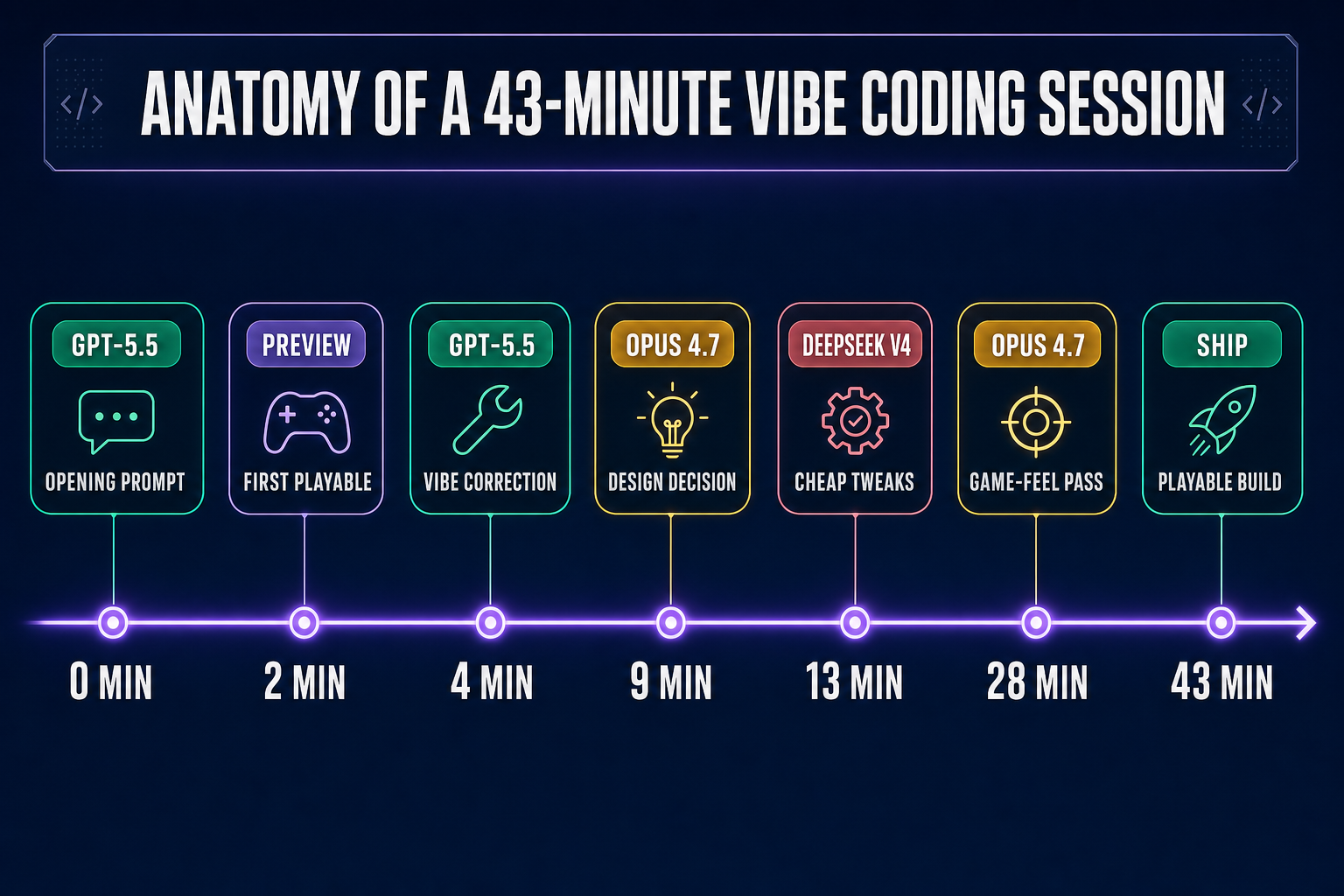

What does an actual vibe-coding session look like for a small game? Here’s a session we ran in WizardGenie targeting a 2D platformer with magic. Forty-three minutes start to finish, ending with a playable build.

- Minute 0 — opening prompt: “Build a 2D platformer in Phaser 3 with a wizard who throws fireballs. Three platforms, a death pit, a score counter.” Model: GPT-5.5 (sharp at scaffolding).

- Minute 2 — first playable: Browser preview shows the wizard, the platforms, and a working fireball. The slimes are too big and the fireballs feel weak.

- Minute 4 — vibe correction: “Fireballs feel weak. Make them faster and add a tiny screen shake on hit.” Agent edits the player controller and adds a Phaser camera shake. Done in one turn.

- Minute 9 — direction shift: “Actually, what if the fireballs ricochet off platforms once?” Bigger change. Switch to Claude Opus 4.7 because the bouncing logic touches the physics body and needs careful reasoning.

- Minute 13 — back to fast iteration: Swap to DeepSeek V4 Pro for a sequence of small tweaks: jump height, gravity, slime damage values. Cheap, fast, no design decisions.

- Minute 28 — game-feel pass: “It’s fine but it’s not fun. The wizard’s movement feels glued to the ground.” Back to Opus to diagnose. It identifies that acceleration is too high and adds a small coyote-time window.

- Minute 43 — ship: Final tweaks to the score display and a fireworks effect on level complete. Build is playable, fun, and roughly 800 lines.
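
For readers who haven’t met the terms, two of the changes in this session can be sketched in a few lines. What follows is a minimal, engine-agnostic TypeScript sketch, not the code the agent produced: `CoyoteJump`, `Fireball`, `onPlatformHit`, and the 100 ms window are all hypothetical names and values chosen for illustration. In a real Phaser 3 project this logic would hang off the engine’s physics bodies and collision callbacks instead.

```typescript
// Hypothetical sketch: none of these names or numbers come from the session itself.

/** Coyote time: keep the jump available for a short window after leaving the ground. */
class CoyoteJump {
  private msSinceGrounded = Number.POSITIVE_INFINITY;
  private readonly windowMs: number;

  constructor(windowMs: number = 100) { // 100 ms is an assumed value
    this.windowMs = windowMs;
  }

  /** Call once per frame with the frame time and whether the player touches the ground. */
  update(deltaMs: number, onGround: boolean): void {
    this.msSinceGrounded = onGround ? 0 : this.msSinceGrounded + deltaMs;
  }

  /** True while grounded, or within the grace window after walking off a ledge. */
  canJump(): boolean {
    return this.msSinceGrounded <= this.windowMs;
  }

  /** Call when a jump starts so the window cannot be reused in mid-air. */
  consume(): void {
    this.msSinceGrounded = Number.POSITIVE_INFINITY;
  }
}

/** Minimal fireball state for the "ricochet once" rule. */
interface Fireball {
  vx: number;
  vy: number;
  bouncesLeft: number; // 1 means "ricochet once"
  alive: boolean;
}

/** Hit on a flat platform surface: flip vertical velocity while bounces remain, else expire. */
function onPlatformHit(f: Fireball): Fireball {
  if (f.bouncesLeft > 0) {
    return { ...f, vy: -f.vy, bouncesLeft: f.bouncesLeft - 1 };
  }
  return { ...f, alive: false };
}
```

In a game loop you would call `update` every frame, check `canJump()` when the jump key is pressed, and call `consume()` once the jump starts. The bounce counter is the whole “ricochet once” rule: a fireball spawned with `bouncesLeft: 1` survives its first platform hit and expires on the second.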

What’s worth noticing: the spec for the finished game does not exist anywhere. There is no design document. The closest thing is the chat history. That’s the load-bearing observation about vibe coding — the design is co-authored with the agent in real time, encoded in the sequence of nudges, and visible only by reading the transcript backwards.
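
One way to picture a design that lives only in the transcript is to treat each nudge as a small patch over a set of tuning values, applied in chat order. The sketch below is a hypothetical model, not how WizardGenie stores sessions: the `Tuning` fields, the `Nudge` shape, the `replay` helper, and every number are assumptions made up for illustration. Only the minutes and the gist of the prompts come from the session above.

```typescript
// Hypothetical model: field names and all numeric values are illustrative only.
interface Tuning {
  fireballSpeed: number;
  fireballBounces: number;
  coyoteMs: number;
}

interface Nudge {
  minute: number;         // when the prompt was sent
  prompt: string;         // what the human said
  patch: Partial<Tuning>; // what changed as a result
}

/** Apply the nudges in chat order; later patches override earlier values. */
function replay(base: Tuning, nudges: Nudge[]): Tuning {
  return nudges.reduce<Tuning>((t, n) => ({ ...t, ...n.patch }), base);
}

const base: Tuning = { fireballSpeed: 300, fireballBounces: 0, coyoteMs: 0 };

const transcript: Nudge[] = [
  { minute: 4, prompt: "Fireballs feel weak.", patch: { fireballSpeed: 450 } },
  { minute: 9, prompt: "What if the fireballs ricochet once?", patch: { fireballBounces: 1 } },
  { minute: 28, prompt: "Movement feels glued to the ground.", patch: { coyoteMs: 100 } },
];

const finalTuning = replay(base, transcript);
```

Nothing in `base` describes the finished game. The final values only exist after the nudges are replayed, which is the sense in which the chat history is the closest thing to a design document.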