Most one-click image-to-pixel-art converters do the same wrong thing: they take a high-resolution input, downscale it to a tiny grid with bicubic resampling, and call it pixel art. The result reads as a low-resolution photo, not a sprite. Real pixel art has a tiny fixed palette, hard pixel edges, and a transparent background ready to drop into a game engine. Getting all three from a source image takes a real palette quantizer, a dither choice, and an edge-cleanup pass, not just a downscale. This guide walks through the five-minute pipeline that actually produces game-ready sprites, the math behind each step, and a worked walkthrough inside Sorceress True Pixel.

The image-to-pixel-art problem nobody admits

An image is a continuous-tone surface — thousands of subtle colour gradients across every visible pixel. A pixel-art sprite is the opposite: a small grid of cells, each one snapped to a value in a tiny fixed palette, designed to read clearly at sprite resolution and to compress losslessly into the engine's asset bundle. Going from one to the other is not a resize. It is a colour quantization problem — the same mathematical job that GIF encoders, indexed-PNG writers, and old-school graphics drivers solved when displays could only show 256 colours at a time.

The naive image-to-pixel-art converter skips that step. It downsamples to a small grid, stretches the result back up with nearest-neighbour, and ships it. The pixels look square, the visual cue says pixel art, but every cell is still picking from millions of possible RGB values. Two adjacent cells that look the same colour to the eye are actually different by a few RGB units, and that difference makes the sprite read as a thumbnail of a photograph. It does not read as a sprite. The fix is to limit the palette before the export, by snapping every pixel to its nearest neighbour in a deliberately tiny set — sixteen colours, thirty-two, or whichever count matches the look you want. That snap is the entire game.
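The snap itself is small enough to sketch. The following is a minimal illustration, not True Pixel's code: the four-colour palette and the function names are made up for the example, and distance is plain squared Euclidean distance in RGB.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]

def nearest_palette_colour(pixel: RGB, palette: List[RGB]) -> RGB:
    """Return the palette entry with the smallest squared RGB distance to pixel."""
    return min(palette, key=lambda c: sum((a - b) ** 2 for a, b in zip(pixel, c)))

def snap_to_palette(pixels: List[RGB], palette: List[RGB]) -> List[RGB]:
    """Snap every pixel to its nearest palette colour."""
    return [nearest_palette_colour(p, palette) for p in pixels]

# Hypothetical palette, chosen only for illustration.
PALETTE = [(0, 0, 0), (255, 255, 255), (200, 40, 40), (40, 80, 200)]

# Three cells that look like the same red but differ by a few RGB units.
cells = [(198, 42, 41), (201, 39, 44), (203, 38, 37)]
snapped = snap_to_palette(cells, PALETTE)

print(len(set(cells)), "distinct colours before,", len(set(snapped)), "after")
```

The three near-identical reds collapse to one palette entry, which is the difference between a thumbnail and a sprite.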

The other thing the naive converter skips is the background. A solid-colour background gets carried through as opaque pixels in the export, which lands in a game engine as an opaque rectangle behind every sprite. The correct workflow either chroma-keys a uniform background out at the source, or runs a model-driven background removal step before the quantization. Both paths produce a transparent PNG; both are necessary because game engines expect alpha, not "the white pixels are background, trust me."
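A chroma key on a uniform background can be sketched in a few lines. This is an assumption-laden illustration: the function name is hypothetical, and tolerance is interpreted here as Euclidean distance in RGB, which may not be how any particular tool defines it.

```python
from math import dist
from typing import List, Tuple

RGB = Tuple[int, int, int]
RGBA = Tuple[int, int, int, int]

def chroma_key(pixels: List[RGB], key: RGB, tolerance: float) -> List[RGBA]:
    """Make every pixel within `tolerance` of the key colour fully transparent."""
    out = []
    for p in pixels:
        # Assumption: tolerance is a Euclidean radius around the key colour.
        alpha = 0 if dist(p, key) <= tolerance else 255
        out.append((*p, alpha))
    return out

# A white background, an off-white background pixel, and a red sprite pixel.
row = [(255, 255, 255), (250, 250, 250), (200, 40, 40)]
keyed = chroma_key(row, key=(255, 255, 255), tolerance=60)
print([px[3] for px in keyed])
```

The two background pixels come out with alpha 0 and the sprite pixel keeps alpha 255, so the export carries real transparency instead of an opaque rectangle.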

The five-minute pipeline at a glance

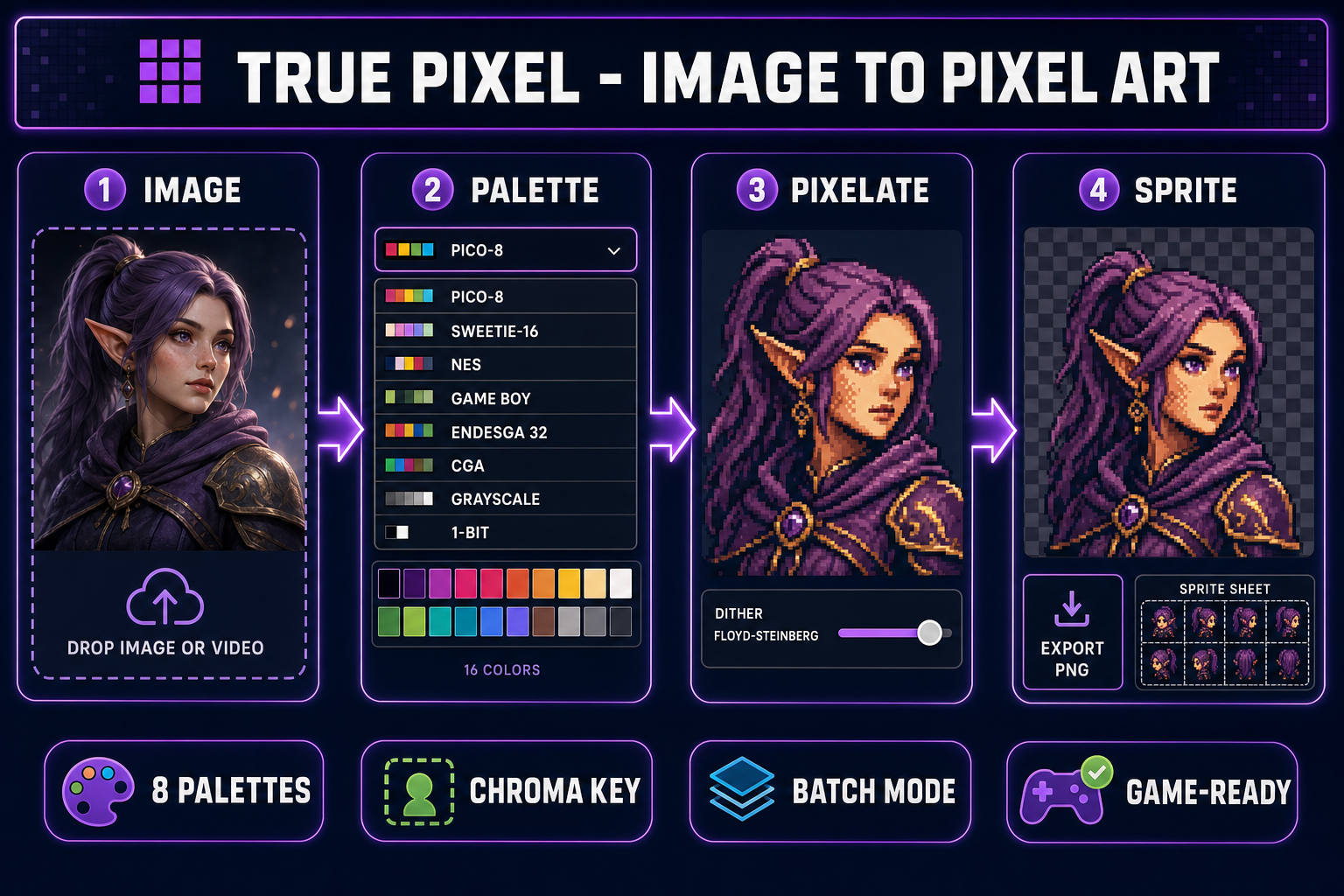

The full image-to-pixel-art conversion pipeline, in order, regardless of which tool you use:

- Source: a high-resolution image (AI render, photograph, or hand drawing). Bigger source is better — the cluster algorithm needs samples to average across.

- Background: chroma-key a uniform background, or run a model background remover for cluttered or photographic sources.

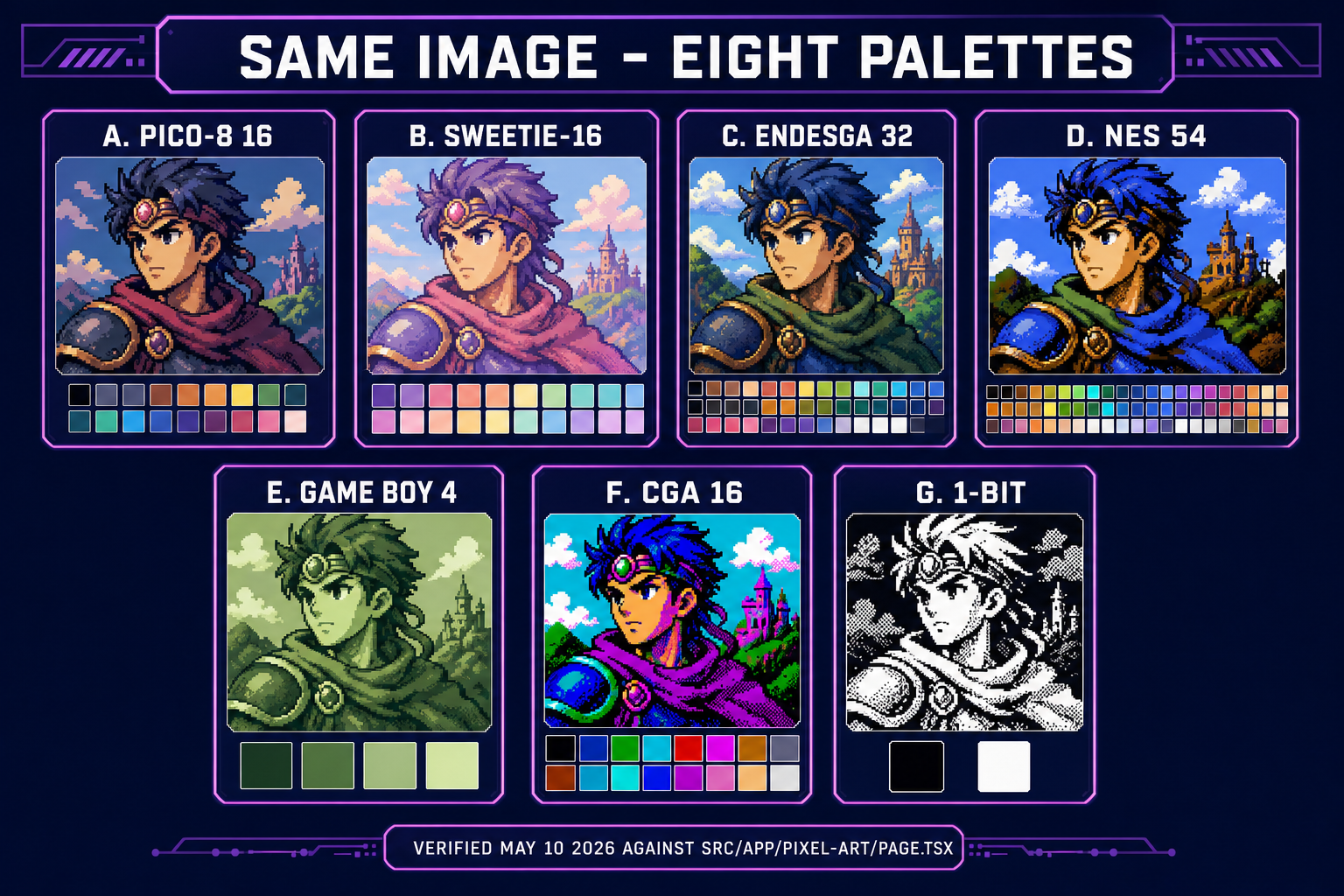

- Palette: pick a preset (PICO-8, SWEETIE-16, NES, Game Boy, Endesga 32, CGA, Grayscale, 1-Bit) or extract a custom palette from the source itself with k-means clustering.

- Quantize: snap every pixel to the nearest palette colour. Optionally dither the result, either by diffusing the quantization error to neighbouring pixels (Floyd-Steinberg) or by applying a tiled threshold matrix (Bayer ordered dither).

- Export: write a transparent PNG at the target sprite resolution, optionally upscaled with nearest-neighbour for display.

True Pixel runs all five in the browser. The interesting design choice is that it runs the palette quantization at the source resolution, not at the target sprite resolution. Doing the math at the larger size lets render noise from AI-generated source images average out across each cluster before the downsample. The downsample then takes the already-quantized pixels and averages them into the target grid, which produces sharper sprite edges than any "downscale-then-quantize" tool.
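The quantize-first ordering can be sketched as follows. This is not True Pixel's implementation. In particular, the text describes the downsample as averaging the quantized pixels; the sketch swaps in a most-frequent-colour vote per block so that every output cell stays on the palette. That substitution, the function names, and the two-colour palette are all assumptions.

```python
from collections import Counter
from typing import List, Tuple

RGB = Tuple[int, int, int]
Image = List[List[RGB]]  # rows of pixels

def snap(pixel: RGB, palette: List[RGB]) -> RGB:
    """Nearest palette colour by squared RGB distance."""
    return min(palette, key=lambda c: sum((a - b) ** 2 for a, b in zip(pixel, c)))

def quantize_then_downsample(image: Image, palette: List[RGB], factor: int) -> Image:
    # Step 1: quantize at full source resolution.
    quantized = [[snap(p, palette) for p in row] for row in image]
    height, width = len(quantized), len(quantized[0])
    # Step 2: reduce each factor x factor block to its most common palette colour.
    # Partial blocks at the right and bottom edges are dropped.
    out = []
    for y in range(0, height - factor + 1, factor):
        out_row = []
        for x in range(0, width - factor + 1, factor):
            block = [quantized[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            out_row.append(Counter(block).most_common(1)[0][0])
        out.append(out_row)
    return out

PALETTE = [(200, 40, 40), (40, 80, 200)]  # hypothetical red and blue
noisy_red, noisy_blue = (190, 50, 45), (50, 70, 210)
image = [[noisy_red, noisy_red, noisy_blue, noisy_blue] for _ in range(4)]

sprite = quantize_then_downsample(image, PALETTE, factor=2)
print(sprite)
```

The 4x4 noisy source becomes a 2x2 sprite whose left column is the palette red and whose right column is the palette blue, with a hard edge between them.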

What a real palette quantizer does (and why it matters)

The palette-quantization step is where every image-to-pixel-art converter either succeeds or falls apart. The job is to take an image with thousands of distinct colours and find the small set of palette colours that minimizes the visible error after every pixel is snapped to its nearest neighbour. This is a k-means clustering problem in three-dimensional colour space: each pixel is a point in (R, G, B), each palette colour is the centroid of a cluster of nearby points, and the algorithm alternates between reassigning pixels to their nearest centroid and re-centring each cluster on the mean of its members until the assignments stop changing.
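A bare-bones version of that loop, in plain Python, looks roughly like this. It is a sketch under stated assumptions: the function name is hypothetical, the seeding (evenly spaced samples from the pixel list) is chosen only to keep the example deterministic, and distance is unweighted squared Euclidean distance in RGB.

```python
from typing import List, Tuple

Colour = Tuple[float, float, float]

def sq_dist(a: Colour, b: Colour) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans_palette(pixels: List[Colour], k: int, max_iters: int = 50) -> List[Colour]:
    """Extract k palette colours as the centroids of k-means clusters."""
    if len(pixels) < k:
        raise ValueError("need at least k pixels")
    # Assumption: deterministic seeding from evenly spaced samples.
    step = len(pixels) // k
    centres = [pixels[i * step] for i in range(k)]
    assignment = None
    for _ in range(max_iters):
        # Assign each pixel to its nearest centre.
        new_assignment = [min(range(k), key=lambda i: sq_dist(p, centres[i]))
                          for p in pixels]
        if new_assignment == assignment:
            break  # assignments stopped changing
        assignment = new_assignment
        # Move each centre to the mean of its members.
        for i in range(k):
            members = [p for p, a in zip(pixels, assignment) if a == i]
            if members:  # leave an empty cluster's centre where it is
                centres[i] = tuple(sum(ch) / len(members) for ch in zip(*members))
    return centres

pixels = [(250, 10, 10), (240, 20, 20), (10, 10, 250), (20, 20, 240)]
print(kmeans_palette(pixels, k=2))
```

With two reddish and two bluish pixels, the two centres settle on the mean red and the mean blue after one update.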

The detail that matters: which colour space the algorithm runs in. Naive Euclidean distance in raw sRGB treats green and red and blue as equally important, which the human eye does not. The eye is dramatically more sensitive to green — a one-unit shift in green is more visible than a one-unit shift in red or blue. True Pixel uses a perceptually-weighted distance metric (a weighted Euclidean variant in sRGB) so the cluster centres land closer to where the human eye reads the colour boundaries. This is why the same source image quantized through a perceptual quantizer reads as cleaner pixel art than the same image quantized through a raw-RGB quantizer — the cluster centres are placed where the eye sees boundaries, not where the maths spreads them evenly.
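To see how a weighted metric changes the result, compare the nearest palette colour under both distances. The weights True Pixel uses are not stated here, so this sketch borrows the Rec. 601 luma coefficients (0.299, 0.587, 0.114) purely as a stand-in; the function names and sample colours are likewise illustrative.

```python
from typing import Callable, List, Tuple

RGB = Tuple[int, int, int]

# Assumption: Rec. 601 luma weights as a stand-in for a perceptual weighting.
WEIGHTS = (0.299, 0.587, 0.114)

def raw_sq_dist(a: RGB, b: RGB) -> float:
    """Unweighted squared Euclidean distance in RGB."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def weighted_sq_dist(a: RGB, b: RGB) -> float:
    """Squared distance with each channel scaled by its weight."""
    return sum(w * (x - y) ** 2 for w, x, y in zip(WEIGHTS, a, b))

def nearest(pixel: RGB, palette: List[RGB], metric: Callable[[RGB, RGB], float]) -> RGB:
    return min(palette, key=lambda c: metric(pixel, c))

pixel = (100, 100, 100)
palette = [
    (100, 110, 100),  # off by 10 in green
    (100, 100, 112),  # off by 12 in blue
]

print("raw:     ", nearest(pixel, palette, raw_sq_dist))
print("weighted:", nearest(pixel, palette, weighted_sq_dist))
```

The raw metric prefers the green-shifted candidate because 10 is less than 12. The weighted metric prefers the blue-shifted one, because a 12-unit blue error counts for less than a 10-unit green error.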

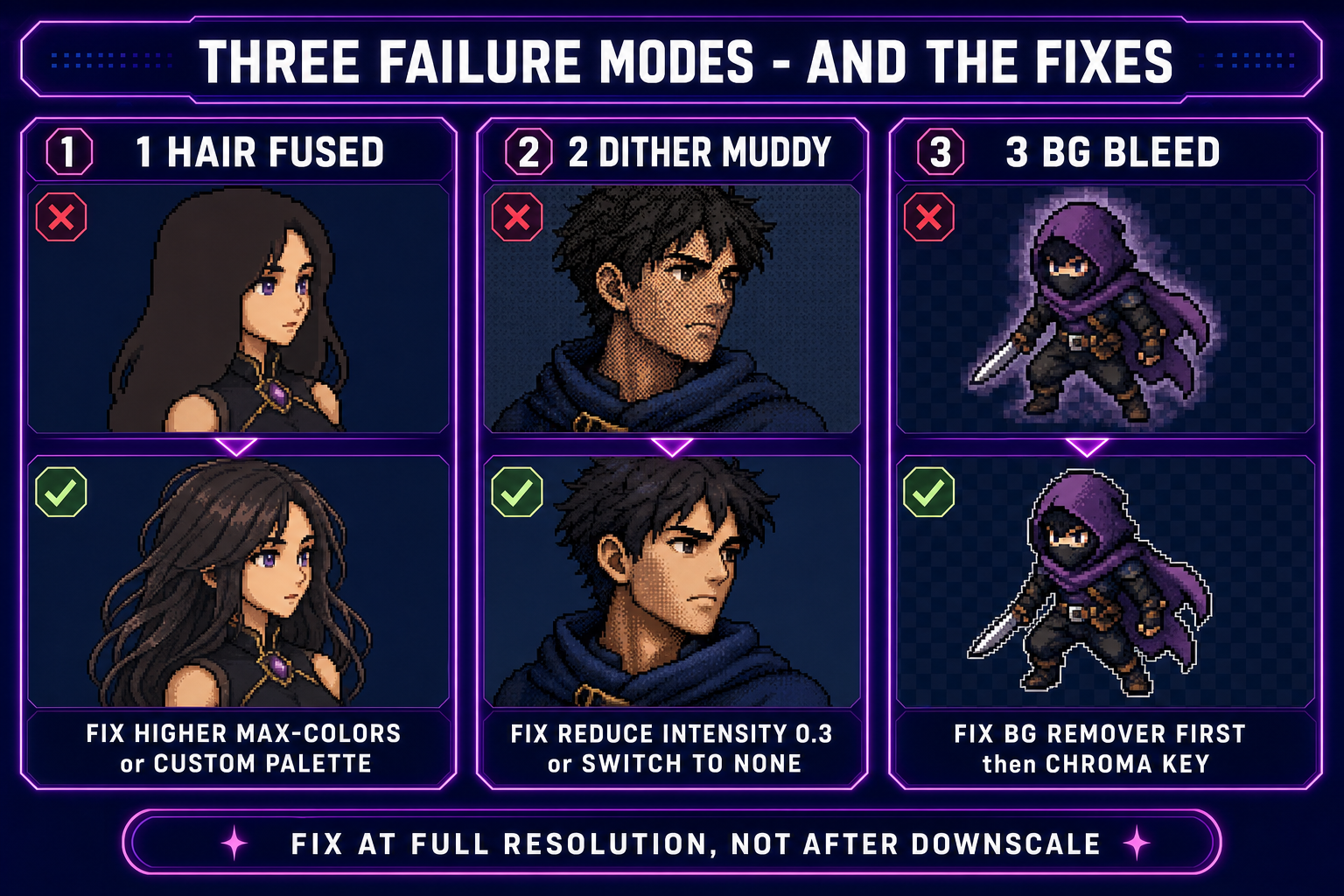

The other knob is whether to diffuse the quantization error to neighbouring pixels. Without dither, every pixel snaps cleanly to its nearest palette colour and the result has banding in continuous-tone regions (skin, sky, smoke). With dither, the difference between the original colour and the snapped palette colour is spread across nearby pixels, which trades banding for a checkerboard or stippled texture. The three dither options in True Pixel:

- Floyd-Steinberg — a serial error-diffusion algorithm published in 1976 that pushes 7/16 of the residual error to the right neighbour, 3/16 to the bottom-left, 5/16 directly below, and 1/16 to the bottom-right. The result is the classic stippled pixel-art texture you see in old shareware games. Wikipedia has the full coefficient breakdown.

- Ordered (Bayer) — a parallel algorithm that uses a fixed threshold matrix tiled across the image. Faster than Floyd-Steinberg, produces a more visible crosshatch pattern, and works well when you want a deliberate retro shimmer. The Wikipedia ordered-dithering article covers the matrix-construction recursion if you want the math.

- None — hard quantization with no error spread. The right pick when the palette is large (32+ colours) and the source is already low-noise, or when the look you want is the sharper, posterized aesthetic of modern indie pixel art rather than the textured aesthetic of the 1990s.
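Both dither algorithms are easiest to follow on a grayscale image reduced to 1-bit. The sketch below is a simplified illustration rather than True Pixel's code: it works on a single channel, uses a fixed threshold of 128, and uses the smallest (2x2) Bayer matrix. The function names are hypothetical.

```python
from typing import List

Gray = List[List[float]]  # rows of values in 0..255

def floyd_steinberg_1bit(image: Gray) -> List[List[int]]:
    """Serial error diffusion with the 7/16, 3/16, 5/16, 1/16 weights."""
    h, w = len(image), len(image[0])
    work = [row[:] for row in image]  # working copy that accumulates error
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            old = work[y][x]
            new = 255 if old >= 128 else 0
            out[y][x] = new
            err = old - new
            if x + 1 < w:
                work[y][x + 1] += err * 7 / 16          # right
            if y + 1 < h:
                if x > 0:
                    work[y + 1][x - 1] += err * 3 / 16  # bottom-left
                work[y + 1][x] += err * 5 / 16          # directly below
                if x + 1 < w:
                    work[y + 1][x + 1] += err * 1 / 16  # bottom-right
    return out

BAYER_2X2 = [[0, 2], [3, 1]]

def bayer_1bit(image: Gray) -> List[List[int]]:
    """Ordered dither: compare each pixel against a tiled threshold matrix."""
    out = []
    for y, row in enumerate(image):
        out_row = []
        for x, value in enumerate(row):
            threshold = (BAYER_2X2[y % 2][x % 2] + 0.5) / 4 * 255
            out_row.append(255 if value > threshold else 0)
        out.append(out_row)
    return out

mid_gray = [[128.0] * 4 for _ in range(4)]
for row in bayer_1bit(mid_gray):
    print(row)
```

Each Bayer pixel depends only on its own value and position, so the loop can run in parallel. Floyd-Steinberg has to visit pixels in order, because each decision depends on error pushed forward from earlier ones.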

The Dither Intensity slider controls how much of the residual error gets pushed forward. At 1.0 the algorithm distributes the full error; at 0.3 only 30% of it. Lower intensity means fewer dither artefacts and more banding; higher intensity means more dither texture and less banding. The right setting depends on the source: photographic sources with skin tones usually want 0.5; clean AI renders with flat regions usually want 0.2 or none.
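As a worked example of the arithmetic, assume the slider simply scales the residual before the Floyd-Steinberg weights are applied. That linear scaling is an assumption consistent with the description above, not a confirmed detail, and the function name is hypothetical.

```python
from typing import Dict

def diffused_shares(residual: float, intensity: float) -> Dict[str, float]:
    """Split a residual error across the four Floyd-Steinberg neighbours.

    Assumption: intensity scales the residual linearly before it is split.
    """
    scaled = residual * intensity
    return {
        "right": scaled * 7 / 16,
        "bottom_left": scaled * 3 / 16,
        "below": scaled * 5 / 16,
        "bottom_right": scaled * 1 / 16,
    }

# A pixel that lands 32 units away from its palette colour in one channel.
print(diffused_shares(32, intensity=1.0))
print(diffused_shares(32, intensity=0.5))
```

At intensity 1.0 the four neighbours receive 14, 6, 10, and 2 units, which sum to the full 32. At 0.5 they receive 7, 3, 5, and 1, and the remaining 16 units of error are dropped, which is where the extra banding comes from.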

Step-by-step in True Pixel: three source types, three settings

Open True Pixel. The drop-zone in the centre of the page accepts images, video files, and entire batch folders. The right rail has the palette picker, the dither dropdown, the intensity slider, the chroma-key toggle, the edge-cleanup toggle, and the target-resolution slider.

The settings that actually matter change with the source type. Three worked recipes that cover most game-dev use cases:

- AI render → pixel sprite. Source is a clean stylized character image generated by AI Image Gen or another image model. Background is usually a flat colour or transparent. Settings: palette PICO-8 16 (or SWEETIE-16 for softer hues), dither none or ordered at 0.2, chroma-key on with the picker set to the background colour at tolerance 60, target resolution 64x64 for a character or 128x128 for a hero portrait. The result is a clean pixel sprite with no halo and no banding.

- Photograph → pixel portrait. Source is a real photo with cluttered background. Settings: run BG Remover first to get a transparent cutout, then drop into True Pixel. Palette Endesga 32 for skin-tone range, dither Floyd-Steinberg at 0.5 to handle the smooth gradients, target 96x96 for a recognisable portrait. The result reads as a stylized version of the photo, not a low-resolution thumbnail.

- Hand drawing → pixel sprite. Source is a sketch or illustration with line work and minimal shading. Settings: palette 1-Bit or Grayscale 8 if the drawing is monochrome; PICO-8 if it is coloured. Dither none — line art has no continuous tones to diffuse. Edge cleanup on at 1px to thicken the lines into readable pixel strokes. Target resolution 32x32 for icons, 64x64 for characters.
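If you keep notes for batch work, the three recipes above can be written down as data. The structure below is a hypothetical note-taking format, not a True Pixel API or file format; every field name is invented for the example, and each recipe records one of the options the text lists.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

@dataclass(frozen=True)
class Recipe:
    palette: str
    dither: str                                  # "none", "ordered", or "floyd-steinberg"
    dither_intensity: float
    target: Tuple[int, int]                      # sprite width, height in pixels
    chroma_key_tolerance: Optional[int] = None   # None means chroma key off
    edge_cleanup_px: Optional[int] = None        # None means edge cleanup off

# Values taken from the three recipes above; names are illustrative only.
RECIPES: Dict[str, Recipe] = {
    "ai_render": Recipe("PICO-8", "ordered", 0.2, (64, 64), chroma_key_tolerance=60),
    "photograph": Recipe("Endesga 32", "floyd-steinberg", 0.5, (96, 96)),
    "hand_drawing": Recipe("1-Bit", "none", 0.0, (32, 32), edge_cleanup_px=1),
}

for name, recipe in RECIPES.items():
    print(name, recipe)
```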

Hit Convert. The pipeline runs entirely in the browser; the result appears in the preview pane within a second or two for a single image, longer for batches. Refine by adjusting the dither intensity and re-running — the cluster centres are deterministic for a given source and palette, so the only variability is in which dither artefacts the algorithm distributes where.