An AI character generator is the easiest way to design a hero, villain, NPC, or pet companion in 2026, except for one annoying problem: the second render almost never looks like the first. Solving that, by keeping the same character on-model across every angle, expression, and outfit, is the single biggest improvement you can make to your asset pipeline. This is the practical guide.

What an AI character generator does well in 2026

- The job of an AI character generator isn’t just to spit out one character — it’s to produce a consistent cast you can ship.

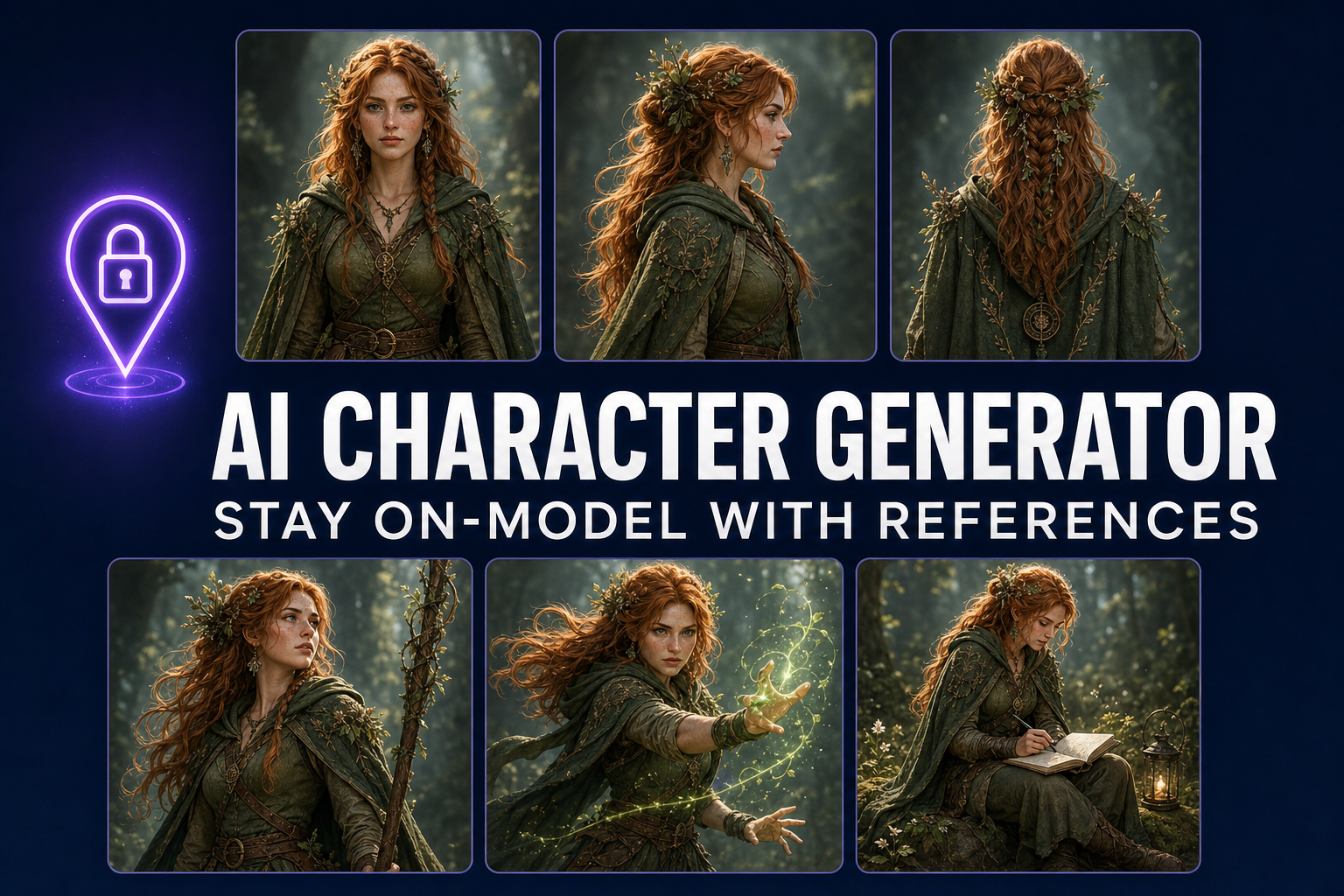

- The “on-model” problem (every render looks like a different character) is solved with reference images, not better prompts. Every flagship model in 2026 supports them.

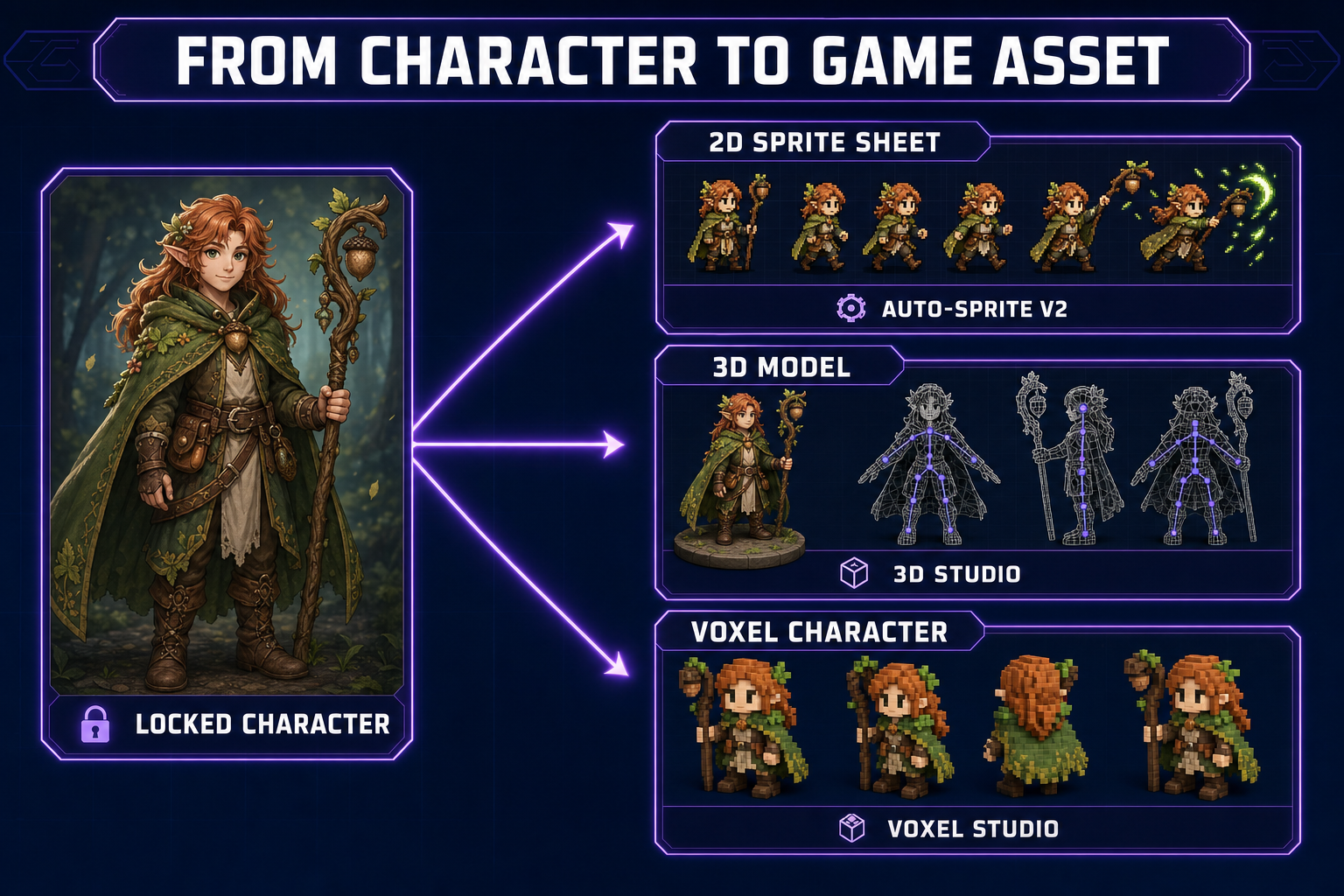

- For game-dev specifically, the right pipeline is: prompt → reference-locked turnaround → 2D sprite or 3D model → animation. Each step keeps the same character across renders.

- The model that wins for character work changes by use case. We rank all seven below.

- The fastest path: open Sorceress AI Image Gen, prompt with a reference, and lock the character in under five minutes. Then push the model sheet straight into Auto-Sprite or 3D Studio.

The “on-model” problem (and why it kills most AI character generator projects)

Here’s the failure pattern that derails almost every first attempt at a character workflow. You write a great prompt — “a stoic elven ranger with silver hair, a dark green cloak, and a bow.” The model produces a render. You love it. You prompt again for the same character running, and the silver hair is now darker, the cloak is now teal, and the elf has subtly different cheekbones. Generate twenty times and you have twenty different characters who all loosely resemble your prompt.

This is not a prompting problem. Even the most carefully constructed prompts hit the same wall: the text is too low-bandwidth to fully specify a face. There are millions of plausible elven rangers with silver hair and a green cloak. The model picks one each time. You’ll never prompt your way out of this.

The fix is to give the model an actual image as a constraint, not just text. That's reference-image conditioning, and every flagship model in 2026 supports it natively. Once you have one canonical render of your character, every subsequent render uses it as the source of truth for face, hair, outfit, and color palette.

The reference-image fix (how to lock a character so it stays on-model)

The mechanic is simple. You generate one canonical render — the “hero shot” — and then attach it as a reference image for every subsequent generation. The model uses the reference for identity (face, hair, body type, signature outfit details) and the prompt for what’s changing (pose, expression, lighting, environment).
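The split between what stays fixed (the reference) and what changes (the prompt) can be sketched as a plain data structure. This is a minimal illustration under stated assumptions: `GenerationRequest` and `variant_requests` are hypothetical names, and the shape is not the request format of Sorceress AI Image Gen or any model vendor's API. The point is only that the same hero shot rides along with every request while the prompt carries the one thing that changes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GenerationRequest:
    """Hypothetical request shape, not any real image API's schema."""
    reference_images: tuple[str, ...]  # identity: face, hair, body type, outfit details
    prompt: str                        # what changes: pose, expression, lighting, environment


def variant_requests(hero_shot: str, spec: str, changes: list[str]) -> list[GenerationRequest]:
    """Attach the same hero-shot reference to every request; vary only the prompt."""
    return [
        GenerationRequest(reference_images=(hero_shot,), prompt=f"{spec}, {change}")
        for change in changes
    ]


if __name__ == "__main__":
    spec = "a stoic elven ranger with silver hair, a dark green cloak, and a bow"
    for req in variant_requests("ranger_hero.png", spec, ["running", "drawing the bow", "night lighting"]):
        print(req.reference_images, "|", req.prompt)
```

Making the request frozen is a deliberate choice in this sketch: once a request is built, nothing downstream can quietly swap out the identity reference.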

Three things to know about reference-locking:

- Reference-image quality compounds. A bad hero shot produces twenty bad downstream renders. Spend extra time on the hero shot specifically: generate ten variations and pick the one with the cleanest face and the most distinctive silhouette. That investment pays off across hundreds of subsequent generations.

- Some models reference better than others. Nano Banana Pro and GPT Image 2 are the strongest at character identity preservation. Flux 2 Pro is excellent at outfits and props but sometimes drifts on faces. Z-Image Turbo is fast but less reliable for tight identity matching.

- Multiple references stack. You can attach two or three images at once — for example, your hero shot plus an outfit reference plus a style reference. The model blends all three. This is how you build characters with specific costumes and consistent rendering treatments simultaneously.

Sorceress AI Image Gen treats reference images as first-class. You can drag in any image, including outputs from a previous generation, and pin them as references for the next batch. It supports multiple references, accent prompts per reference, and saved collections that group a character's full reference set.
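Stacked references can be modeled the same way: each attached image carries a role and an optional short accent prompt describing what to take from it. The `Reference` and `ReferenceSet` types, the role labels, and the `to_payload` field names below are all hypothetical, for illustration only; they are not Sorceress's or any model vendor's API. The sketch enforces one rule from the list above: the hero shot (identity reference) is always present and always first, so adding outfit or style references never displaces identity.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Reference:
    """Hypothetical: one attached image plus what the model should take from it."""
    path: str
    role: str          # illustrative labels: "identity", "outfit", "style"
    accent: str = ""   # optional per-reference accent prompt


@dataclass
class ReferenceSet:
    """Hypothetical saved collection holding a character's full reference set."""
    character: str
    references: list[Reference] = field(default_factory=list)

    def add(self, ref: Reference) -> None:
        # Allow exactly one identity reference and keep it at the front of the stack.
        if ref.role == "identity":
            if any(r.role == "identity" for r in self.references):
                raise ValueError("identity reference already set")
            self.references.insert(0, ref)
        else:
            self.references.append(ref)

    def to_payload(self, prompt: str) -> dict:
        """Assemble a request-like dict; the keys are assumptions, not a real schema."""
        if not self.references or self.references[0].role != "identity":
            raise ValueError("a hero shot (identity reference) is required")
        return {
            "prompt": prompt,
            "references": [
                {"image": r.path, "role": r.role, "accent": r.accent}
                for r in self.references
            ],
        }


if __name__ == "__main__":
    druid = ReferenceSet("woodland druid")
    druid.add(Reference("winter_outfit.png", "outfit", "fur-lined coat only"))
    druid.add(Reference("druid_hero.png", "identity"))
    druid.add(Reference("watercolor.png", "style", "soft watercolor shading"))
    print(druid.to_payload("three-quarter view, standing in snow"))
```

Even though the outfit reference was added first in the usage example, the hero shot ends up at the front of the payload.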

Step-by-step: build a hero character that stays on-model

Here’s the actual playbook we run. Total time: eight to twelve minutes for a complete locked-down character.

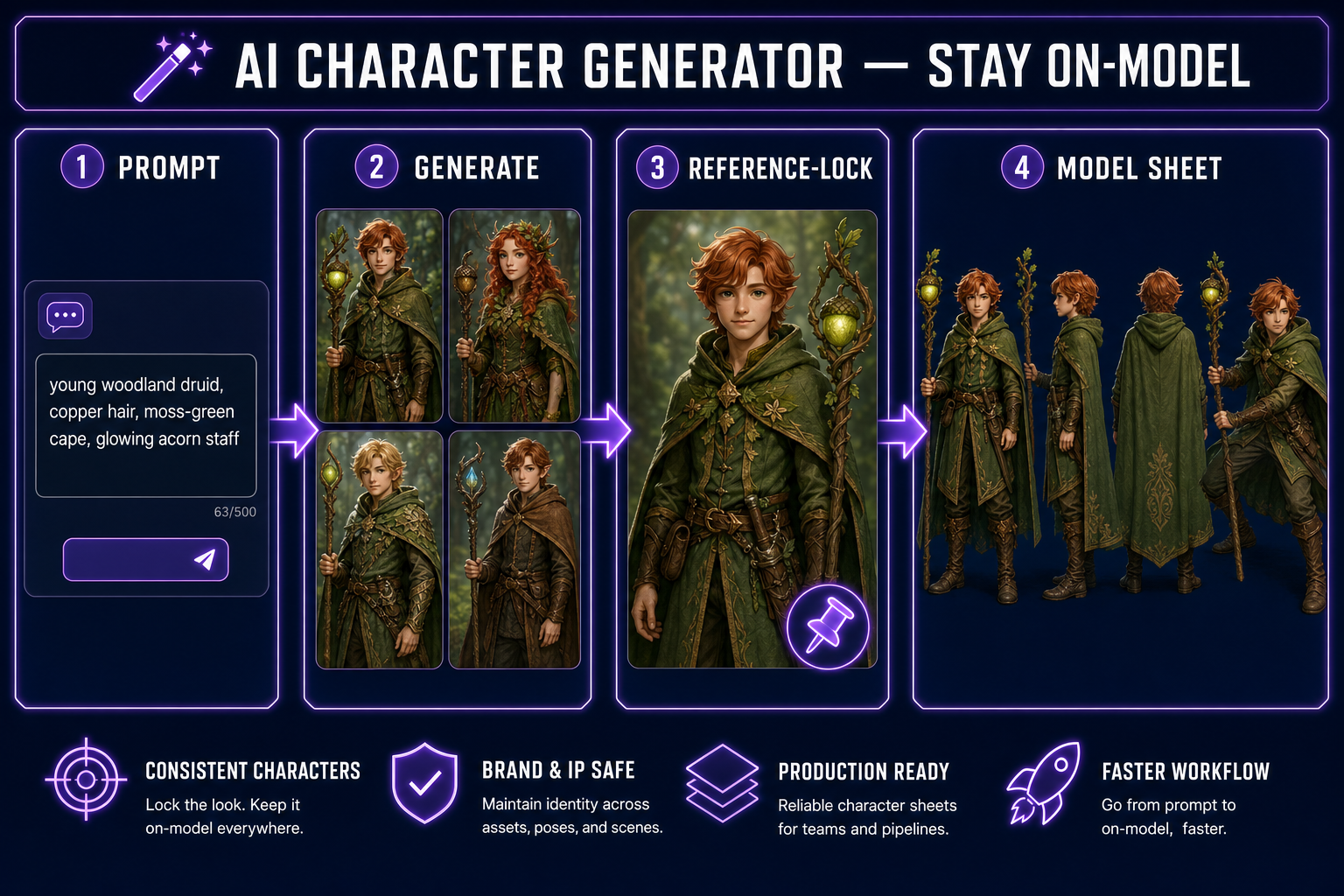

- Write the spec. Two or three sentences describing the character — species/type, distinctive features, costume, color palette, mood. Keep it narrow. “A young woodland druid with copper hair, freckles, a moss-green cape with a leaf-patterned hem, and a wooden staff topped with a glowing acorn.” Pin this as your spec — every prompt going forward references it.

- Generate the hero shot. Prompt: spec + “front-facing portrait, neutral pose, even studio lighting, full body visible, white background.” Pick a strong model — Nano Banana Pro or GPT Image 2 are both excellent. Generate eight variants. Pick the one with the cleanest face, sharpest silhouette, and most distinctive features. This is your reference.

- Build the model sheet. Attach the hero shot as a reference. Run four more generations with the same spec, varying only the pose: “side profile facing right”, “back view”, “three-quarter view”, “action pose mid-step”. You now have a five-view turnaround. This is what professional studios call a “model sheet” — the canonical reference document an artist would use to draw the same character in any context.

- Build expression and outfit variants. Same approach: keep the hero shot as reference, change one variable per prompt. “Smiling”, “angry”, “casting a spell”, “winter coat outfit”. Save these as a collection labeled with the character’s name. You’re building a character bible the AI can stay consistent against.

- Test stress poses. The acid test for on-model: prompt for something extreme. "Falling backwards mid-air, panicked expression, staff flying away." If the character is still recognizable, you've successfully locked it. If not, the hero shot wasn't strong enough: go back to the hero-shot step and pick a different variant.
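The playbook above reduces to a fixed list of prompts built from a single spec. Here is a minimal sketch of that plan, assuming the actual generation call happens elsewhere (no real image API is invoked); `build_plan` and the stage names are hypothetical. It only assembles the prompts: eight hero-shot variants, four turnaround views, one prompt per expression or outfit variant, and one stress pose. Every stage after "hero" is meant to run with the chosen hero shot attached as a reference.

```python
HERO_SUFFIX = "front-facing portrait, neutral pose, even studio lighting, full body visible, white background"
TURNAROUND = ["side profile facing right", "back view", "three-quarter view", "action pose mid-step"]
VARIANTS = ["smiling", "angry", "casting a spell", "winter coat outfit"]
STRESS = "falling backwards mid-air, panicked expression, staff flying away"


def build_plan(spec: str, hero_variants: int = 8) -> dict[str, list[str]]:
    """Return the prompts for each stage of the playbook, keyed by stage name."""
    base = spec.rstrip(".")  # drop a trailing period so suffixes read naturally
    return {
        # Same prompt repeated: generate N candidates, then pick the best as the reference.
        "hero": [f"{base}, {HERO_SUFFIX}"] * hero_variants,
        # Only the pose changes; identity comes from the attached hero shot.
        "turnaround": [f"{base}, {pose}" for pose in TURNAROUND],
        # One variable per prompt: expression or outfit.
        "variants": [f"{base}, {v}" for v in VARIANTS],
        # The on-model acid test.
        "stress": [f"{base}, {STRESS}"],
    }


if __name__ == "__main__":
    spec = ("A young woodland druid with copper hair, freckles, a moss-green cape with a "
            "leaf-patterned hem, and a wooden staff topped with a glowing acorn.")
    plan = build_plan(spec)
    for stage, prompts in plan.items():
        print(f"{stage}: {len(prompts)} generation(s)")
```

With the defaults this comes to 17 generations in total, and the chosen hero shot plus the four turnaround renders gives the five-view model sheet.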

The whole pipeline lives in one panel in AI Image Gen: references, collections, batch generation, and model switching are all on the same surface. There is no export-and-reupload round trip.