A 2026 PBR material is a stack of six images, not one: albedo (the surface color), normal (which way the surface is tilted at every point), roughness (how rough or glossy each pixel is), metallic (which pixels reflect like metal), ambient occlusion (where shadows pool in crevices), and emissive (which pixels glow on their own). Every modern engine, including Unity, Unreal, Godot, and Three.js, expects these maps stacked, aligned, and correctly authored. The traditional pipeline to produce that stack runs through Substance Painter, Photoshop, and a Blender bake pass. The 2026 alternative collapses the whole stack into one browser tab.

How an AI PBR texture generator turns one photo into a material

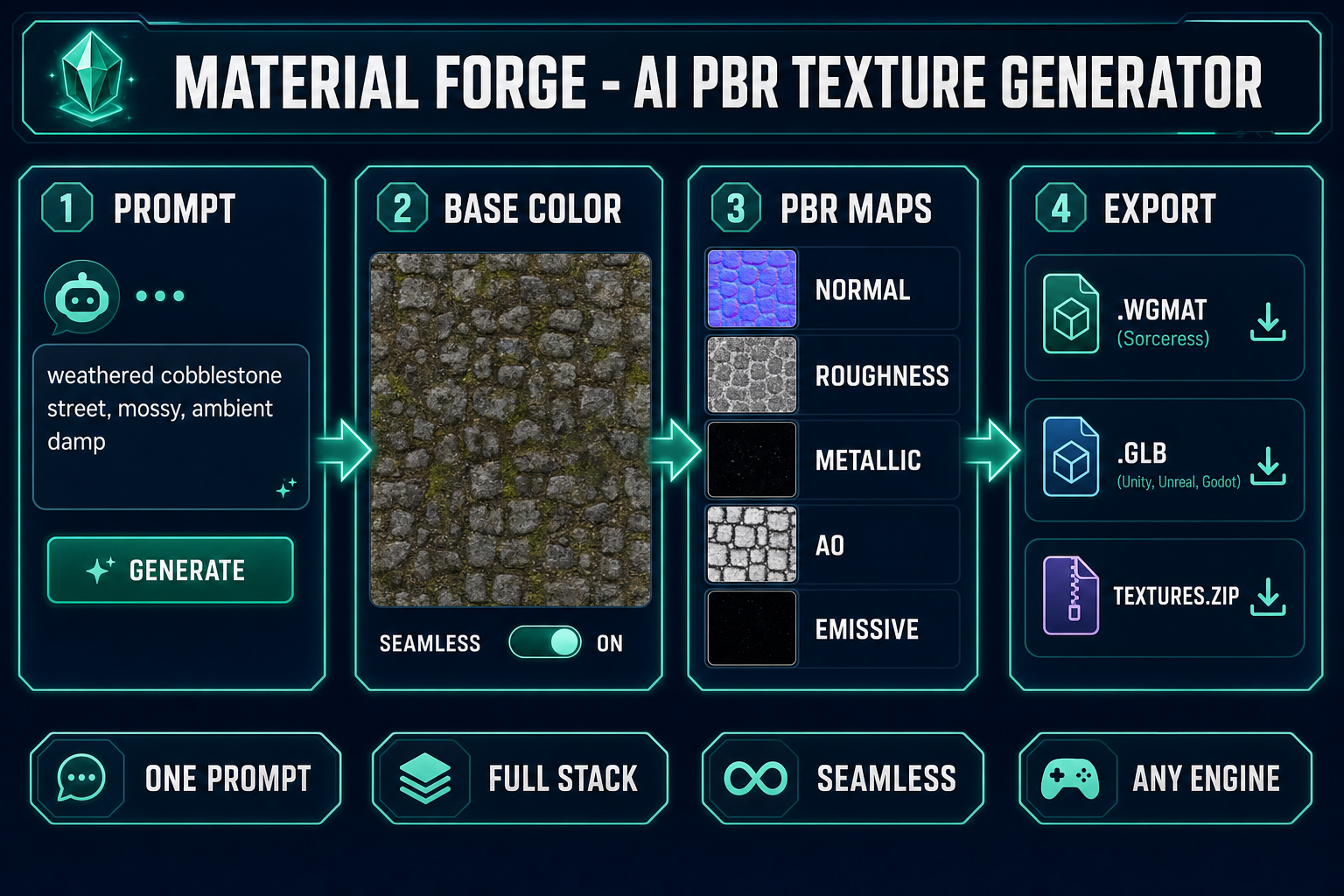

- Open Material Forge in your browser. Type a description (weathered cobblestone street, mossy, ambient damp) or drop a reference photo of the surface you want.

- The texture-generation pass produces a seamless base color (albedo) map using the selected image model — Nano Banana 2 by default, with GPT Image 2, Seedream 5 Lite, Flux 2 Pro, Z-Image Turbo, and Grok Imagine all available in the same picker (verified May 9, 2026 against

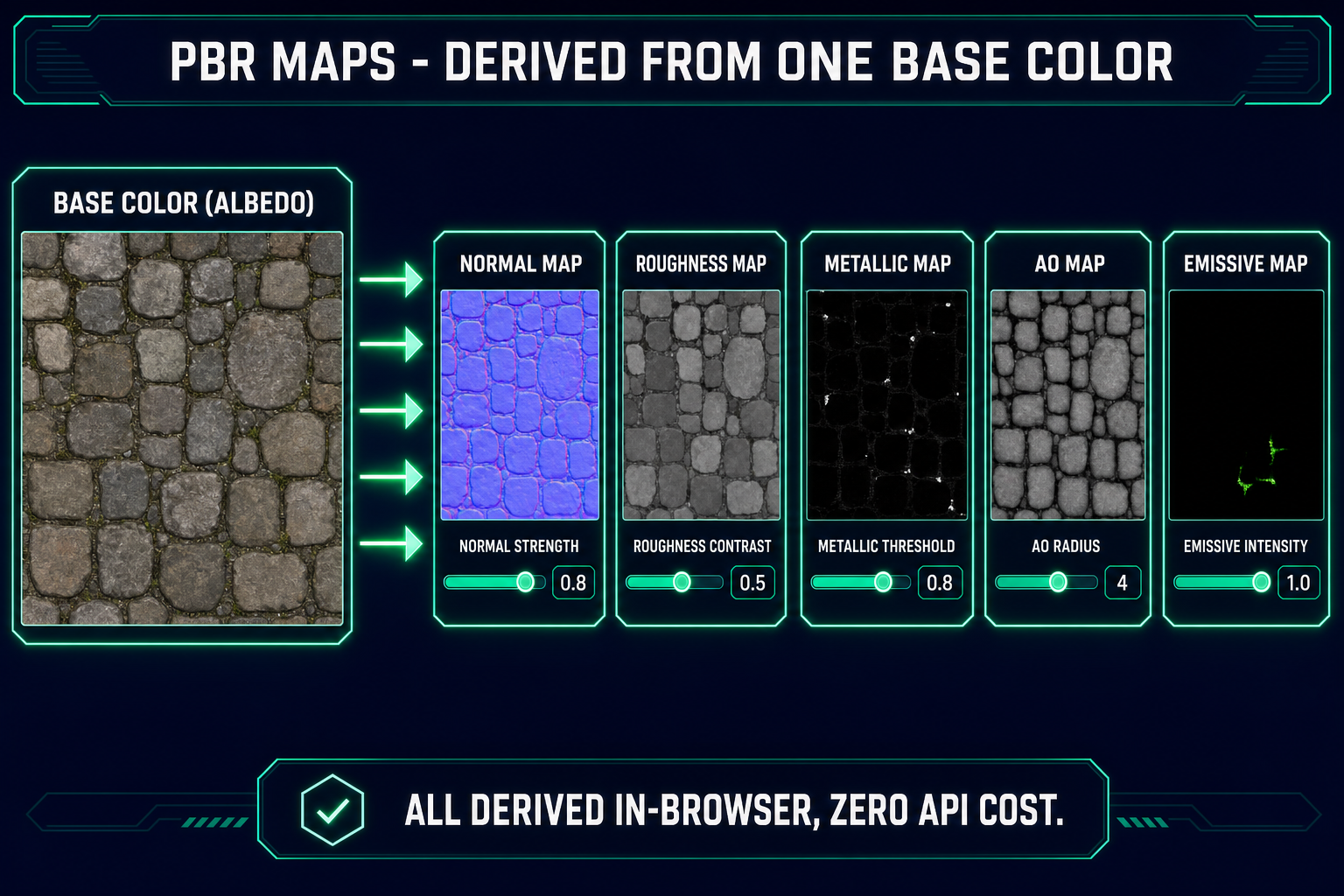

src/lib/models.ts). - The derive pass runs in-browser using canvas-based image processing. From the single base color it produces the normal, roughness, metallic, AO, and emissive maps. Zero API cost; the work happens locally on your GPU.

- The live 3D preview renders the full material on a sphere, cube, torus, plane, cylinder, or repeating tile. Sliders for metallic, roughness, normal intensity, AO, clearcoat, sheen, IOR, and transmission tune the material as a physically based whole.

- Export the result as a WGMAT bundle (one-click drop into a WizardGenie project), a GLB material preview (Unity, Unreal, Godot, Three.js, Babylon.js, Blender), or a raw texture pack zip (six PNG maps wired up by your own pipeline).
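The raw texture pack leaves the wiring to you. If your pipeline is glTF-based (GLB files, Three.js, Babylon.js, Blender's glTF importer), the glTF 2.0 core specification reads roughness from the green channel and metallic from the blue channel of `metallicRoughnessTexture`, and ambient occlusion from the red channel of `occlusionTexture`. A common step is therefore packing three grayscale maps into one RGBA image that can serve both slots. The sketch below assumes one byte per pixel per map. `packOrm` is a hypothetical helper, and Material Forge's own GLB export may already handle this for you.

```typescript
// Pack grayscale AO, roughness and metallic maps into one RGBA buffer using
// the glTF 2.0 channel convention: R = occlusion, G = roughness, B = metallic.
// Each input holds one byte (0..255) per pixel; all maps share one resolution.
type Gray = Uint8ClampedArray;

function packOrm(ao: Gray, roughness: Gray, metallic: Gray): Uint8ClampedArray {
  const n = ao.length;
  if (roughness.length !== n || metallic.length !== n) {
    throw new Error("maps must have the same pixel count");
  }
  const out = new Uint8ClampedArray(n * 4);
  for (let i = 0; i < n; i++) {
    out[i * 4 + 0] = ao[i];        // R: ambient occlusion
    out[i * 4 + 1] = roughness[i]; // G: roughness
    out[i * 4 + 2] = metallic[i];  // B: metallic
    out[i * 4 + 3] = 255;          // A: unused by glTF here, kept opaque
  }
  return out;
}
```

Packing saves two texture fetches per pixel. The cost is that AO, roughness, and metallic can no longer use different resolutions.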

From blank tab to a full PBR material on disk takes roughly two to five minutes, most of it spent in the AI generation step waiting for the image model to return. Everything after the base color is local computation that finishes in seconds.

What “PBR” actually means (and why one photo is not a PBR material)

Physically based rendering is the lighting model every modern game engine uses to make surfaces look correct under real lighting conditions, instead of being painted to look correct under one specific light. PBR is a shading framework grounded in a bidirectional reflectance distribution function (BRDF), a mathematical model of how a surface scatters incoming light back into the world. To evaluate that BRDF, and to add the ambient shadowing and self-emitted light around it, the engine needs to know six things at every point on the surface:

- Base color (albedo) — the underlying color of the surface, with no lighting baked in. A red brick is red even in shadow; that color is the albedo.

- Normal — the surface direction at every pixel, encoded in the RGB channels as a vector. Normal mapping is what makes a flat polygon read as a bumpy stone wall: the normal map tells the shader to light the polygon as if it had the geometry of the high-frequency detail.

- Roughness — how broadly the surface scatters reflected light. Polished marble is low roughness; raw concrete is high roughness.

- Metallic — whether each pixel obeys metal-like or dielectric-like reflection physics. Metal pixels colorize their reflections; dielectric pixels do not.

- Ambient occlusion (AO) — the shadowing that crevices and concavities produce under diffuse skylight, baked into a map so the engine does not have to compute it at runtime.

- Emissive — pixels that emit light independent of the lighting in the scene. Lava cracks, neon glyphs, runes, glowing buttons.
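The metallic split is easiest to see in code. The sketch below follows the reference formulas in the glTF 2.0 specification's metallic-roughness model. Base color feeds the diffuse term only for dielectrics. The specular reflectance at normal incidence (F0) is a fixed 4% for dielectrics and takes on the base color for metals. The `PbrSample` type, the helper names, and the choice to apply AO only to a flat ambient term are simplifying assumptions, not a description of how any particular engine is written. The normal map is left out because it changes the lighting direction rather than these colors.

```typescript
// Simplified per-pixel view of the metallic-roughness inputs.
// Colors are linear RGB in 0..1.
type RGB = [number, number, number];

interface PbrSample {
  baseColor: RGB;    // albedo, no lighting baked in
  metallic: number;  // 0 = dielectric, 1 = metal
  roughness: number; // 0 = mirror-like, 1 = fully rough (shapes the specular lobe, not used below)
  ao: number;        // 1 = unoccluded, 0 = fully occluded
  emissive: RGB;     // light the surface emits on its own
}

const mix = (a: number, b: number, t: number): number => a + (b - a) * t;

// Reflectance at normal incidence for a dielectric with index of refraction `ior`.
function f0FromIor(ior: number): number {
  const r = (ior - 1) / (ior + 1);
  return r * r;
}

// glTF-style split: metals lose their diffuse term and tint their reflections.
function splitMetallic(s: PbrSample, dielectricF0 = f0FromIor(1.5)) {
  const diffuse = s.baseColor.map((c) => c * (1 - s.metallic)) as RGB;
  const f0 = s.baseColor.map((c) => mix(dielectricF0, c, s.metallic)) as RGB;
  return { diffuse, f0 };
}

// In this sketch AO scales only the ambient (skylight) term; emissive is added on top.
function ambientPlusEmissive(s: PbrSample, ambient: RGB): RGB {
  const { diffuse } = splitMetallic(s);
  return diffuse.map((d, i) => d * ambient[i] * s.ao + s.emissive[i]) as RGB;
}
```

The 4% figure is not arbitrary. An index of refraction of 1.5, typical of glass and many plastics, gives F0 = ((1.5 - 1)/(1.5 + 1))² = 0.04, which is what `f0FromIor(1.5)` returns.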

A photograph of a cobblestone street is not a PBR material. It is exactly one of those six channels — the base color — and even that channel has shadows and lighting baked in that should not be there. To go from photograph to material you need a tool that knows the shape of all six maps and how to derive the missing five from the one you have. That is the job an AI PBR texture generator does.
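The article does not say which algorithm the derive pass uses. One widely used heuristic treats the base color's brightness as a height field and turns its Sobel gradients into a tangent-space normal map. It is shown below as an assumption, not as Material Forge's actual code. `deriveNormalMap`, the `strength` parameter, and the RGBA byte layout (the same layout canvas `ImageData.data` uses) are illustrative choices.

```typescript
// Heuristic sketch: brightness -> height -> Sobel gradients -> normal map.
// Pixels are RGBA bytes, the same layout as canvas ImageData.data.

// Rec. 709 luma weights, giving a brightness value in 0..1.
function luminance(rgba: Uint8ClampedArray, i: number): number {
  return (0.2126 * rgba[i] + 0.7152 * rgba[i + 1] + 0.0722 * rgba[i + 2]) / 255;
}

function deriveNormalMap(
  rgba: Uint8ClampedArray,
  width: number,
  height: number,
  strength = 2.0, // hypothetical bump-strength knob
): Uint8ClampedArray {
  // 1. Brightness becomes a height field.
  const h = new Float32Array(width * height);
  for (let p = 0; p < width * height; p++) h[p] = luminance(rgba, p * 4);

  // Wrapping lookup, so a seamless base color yields a seamless normal map.
  const at = (x: number, y: number): number =>
    h[((y + height) % height) * width + ((x + width) % width)];

  const out = new Uint8ClampedArray(width * height * 4);
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      // 2. Sobel gradients; image rows grow downward.
      const dx =
        (at(x + 1, y - 1) + 2 * at(x + 1, y) + at(x + 1, y + 1)) -
        (at(x - 1, y - 1) + 2 * at(x - 1, y) + at(x - 1, y + 1));
      const dy =
        (at(x - 1, y + 1) + 2 * at(x, y + 1) + at(x + 1, y + 1)) -
        (at(x - 1, y - 1) + 2 * at(x, y - 1) + at(x + 1, y - 1));
      // 3. Tilt the normal away from rising height. Using +dy for Y gives a
      //    Y-up (OpenGL-style) green channel, as glTF uses; negate ny for
      //    DirectX-style engines.
      let nx = -dx * strength;
      let ny = dy * strength;
      let nz = 1;
      const len = Math.hypot(nx, ny, nz);
      nx /= len; ny /= len; nz /= len;
      // 4. Encode each component from [-1, 1] into [0, 255].
      const o = (y * width + x) * 4;
      out[o] = (nx * 0.5 + 0.5) * 255;
      out[o + 1] = (ny * 0.5 + 0.5) * 255;
      out[o + 2] = (nz * 0.5 + 0.5) * 255;
      out[o + 3] = 255;
    }
  }
  return out;
}
```

The heuristic also shows the limit of deriving maps from one photo. A dark painted stripe on a flat surface reads as a groove, because brightness is the only evidence of height the image carries.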

Step 1 — Describe or upload your source surface

Open Material Forge and you land on the gallery view with a chat panel on the left, a live 3D preview in the center, and a material inspector on the right. The chat panel is the Material Assistant — a GPT-5 Mini agent that translates natural language into PBR property changes (too shiny, more bumpy, tighter tile, warmer color, less metal) and orchestrates the texture-generation pass.
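To make “natural language into PBR property changes” concrete, here is a hypothetical shape for those changes. The real Material Assistant is an LLM agent, and its internal format is not documented here. `MaterialParams`, `Edit`, `EXAMPLE_EDITS`, and `applyEdit` are illustrative names, and the delta sizes are arbitrary. “Warmer color” is left out because it edits the base color rather than a scalar slider.

```typescript
// Hypothetical: what "too shiny" or "less metal" might compile down to.
// A fixed table stands in for the LLM purely for illustration.
interface MaterialParams {
  metallic: number;        // 0..1
  roughness: number;       // 0..1
  normalIntensity: number; // 0..2 in this sketch
  tiling: number;          // texture repeats across the surface
}

// Additive deltas for any subset of the parameters.
type Edit = Partial<Record<keyof MaterialParams, number>>;

const EXAMPLE_EDITS: Record<string, Edit> = {
  "too shiny": { roughness: +0.2 },
  "more bumpy": { normalIntensity: +0.3 },
  "tighter tile": { tiling: +1 }, // smaller tiles = more repeats
  "less metal": { metallic: -0.3 },
};

const clamp = (v: number, lo: number, hi: number): number => Math.min(hi, Math.max(lo, v));

// Apply the deltas, then keep every value inside a physically sensible range.
function applyEdit(p: MaterialParams, edit: Edit): MaterialParams {
  const next = { ...p };
  for (const key of Object.keys(edit) as (keyof MaterialParams)[]) {
    next[key] += edit[key] ?? 0;
  }
  next.metallic = clamp(next.metallic, 0, 1);
  next.roughness = clamp(next.roughness, 0, 1);
  next.normalIntensity = clamp(next.normalIntensity, 0, 2);
  next.tiling = Math.max(1, next.tiling);
  return next;
}
```

Clamping after every edit is the important part. However a request is phrased, metallic and roughness must stay in 0..1 for the shader to stay physically plausible.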

Two ways in: type a prompt or drop a reference. Prompts that follow these patterns consistently produce clean, tileable surfaces:

- weathered cobblestone street, damp, mossy in the cracks, ambient grey

- rough sandstone block, sun-bleached, tightly tessellated

- hammered iron plate armor, dark patina, rivets at the corners

- woven linen fabric, ivory cream, plain weave, slightly translucent

- volcanic basalt slab, cooling fissures, dim red emissive in cracks

The literal phrase “seamless tiling” is not necessary in the prompt, because Material Forge has a Seamless toggle that prepends the appropriate instructions for the chosen image model. Toggling Seamless ON before generating produces a base color that wraps cleanly at the borders, suitable for tiling on terrain, walls, fabric, brick, and any other repeating surface.
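To confirm that a base color really wraps, compare the pixel jump across the wrap boundary with the typical jump between neighboring pixels inside the image. The sketch below is an independent check you could run on an exported albedo PNG after decoding it to RGBA bytes. It is not part of Material Forge, and `seamReport` is a hypothetical name.

```typescript
// Compare color jumps across the tiling seam (last column -> first column,
// last row -> first row) with jumps between ordinary interior neighbors.
// Pixels are RGBA bytes, the same layout as canvas ImageData.data.
interface SeamReport {
  seam: number;     // mean jump across the wrap boundary, 0..1
  interior: number; // mean jump between interior neighbors, 0..1
}

function seamReport(rgba: Uint8ClampedArray, width: number, height: number): SeamReport {
  const px = (x: number, y: number): number => (y * width + x) * 4;
  // Mean absolute RGB difference between two pixels, scaled to 0..1.
  const diff = (a: number, b: number): number => {
    let d = 0;
    for (let c = 0; c < 3; c++) d += Math.abs(rgba[a + c] - rgba[b + c]);
    return d / (3 * 255);
  };
  let seam = 0, seamN = 0, interior = 0, interiorN = 0;
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      // Right-hand neighbor, wrapping past the last column.
      const right = diff(px(x, y), px((x + 1) % width, y));
      // Neighbor below, wrapping past the last row.
      const below = diff(px(x, y), px(x, (y + 1) % height));
      if (x === width - 1) { seam += right; seamN++; } else { interior += right; interiorN++; }
      if (y === height - 1) { seam += below; seamN++; } else { interior += below; interiorN++; }
    }
  }
  return { seam: seam / seamN, interior: interiorN ? interior / interiorN : 0 };
}
```

A seam score well above the interior score means the border will show when tiled. Roughly equal scores mean the wrap edge behaves like any other pair of neighbors. Comparing against the interior matters because a noisy texture such as gravel has large pixel-to-pixel jumps everywhere.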

If you have a real-world reference — a photograph of the actual leather, the actual stone, the actual tile pattern you want — drop it in the upload zone. Material Forge passes the reference to the chosen image model and the model uses it as a style and color anchor for the seamless base color.

Step 2 — Generate the seamless base color texture

The image-model picker exposes the same models you see in AI Image Gen: Nano Banana Pro, Nano Banana 2 (default for Material Forge), GPT Image 2, Seedream 5 Lite, Flux 2 Pro, Z-Image Turbo, and Grok Imagine. Pick the one whose strengths match your surface:

- Nano Banana 2 — the strong default. Tight prompt adherence, consistent style across re-rolls, fast. Produces clean tileable surfaces for stone, brick, wood, metal, fabric.

- GPT Image 2 — the right pick when the texture has fine in-image text or symbols (rune-engraved stone, painted tiles, signed metal plates). It is the only model in the panel that reliably renders dense legible text inside an image.

- Seedream 5 Lite — the right pick for stylized or uncensored material direction that the other models cannot produce.

- Flux 2 Pro — the right pick for photorealistic surfaces where you have a specific real-world reference and want maximum fidelity.
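The guidance above reduces to a short decision order. In the sketch below, the model names come from the picker list. The `SurfaceNeeds` flags and the priority order (legible text first, then stylization, then photoreal reference matching) are assumptions for illustration, not logic Material Forge runs.

```typescript
// The picker's models, as listed in the article.
type ImageModel =
  | "Nano Banana Pro" | "Nano Banana 2" | "GPT Image 2" | "Seedream 5 Lite"
  | "Flux 2 Pro" | "Z-Image Turbo" | "Grok Imagine";

// Hypothetical description of what a surface needs from the model.
interface SurfaceNeeds {
  legibleText?: boolean;            // runes, painted tiles, signed plates
  stylizedDirection?: boolean;      // looks the other models cannot produce
  photorealFromReference?: boolean; // matching a specific real-world photo
}

// Assumed priority: text rendering is the hardest requirement to satisfy,
// so it wins; otherwise fall back to the Material Forge default.
function pickModel(needs: SurfaceNeeds): ImageModel {
  if (needs.legibleText) return "GPT Image 2";
  if (needs.stylizedDirection) return "Seedream 5 Lite";
  if (needs.photorealFromReference) return "Flux 2 Pro";
  return "Nano Banana 2";
}
```

For example, a rune-engraved stone matched to a reference photo still routes to GPT Image 2. Under this ordering, legible glyphs matter more than photographic fidelity.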

Click Generate. The base color appears in the preview within roughly fifteen to forty-five seconds depending on the model. Re-roll until the surface character matches what you want; the prompt, model, parameters, and any tags are saved with the material so you can reuse the exact pipeline later.