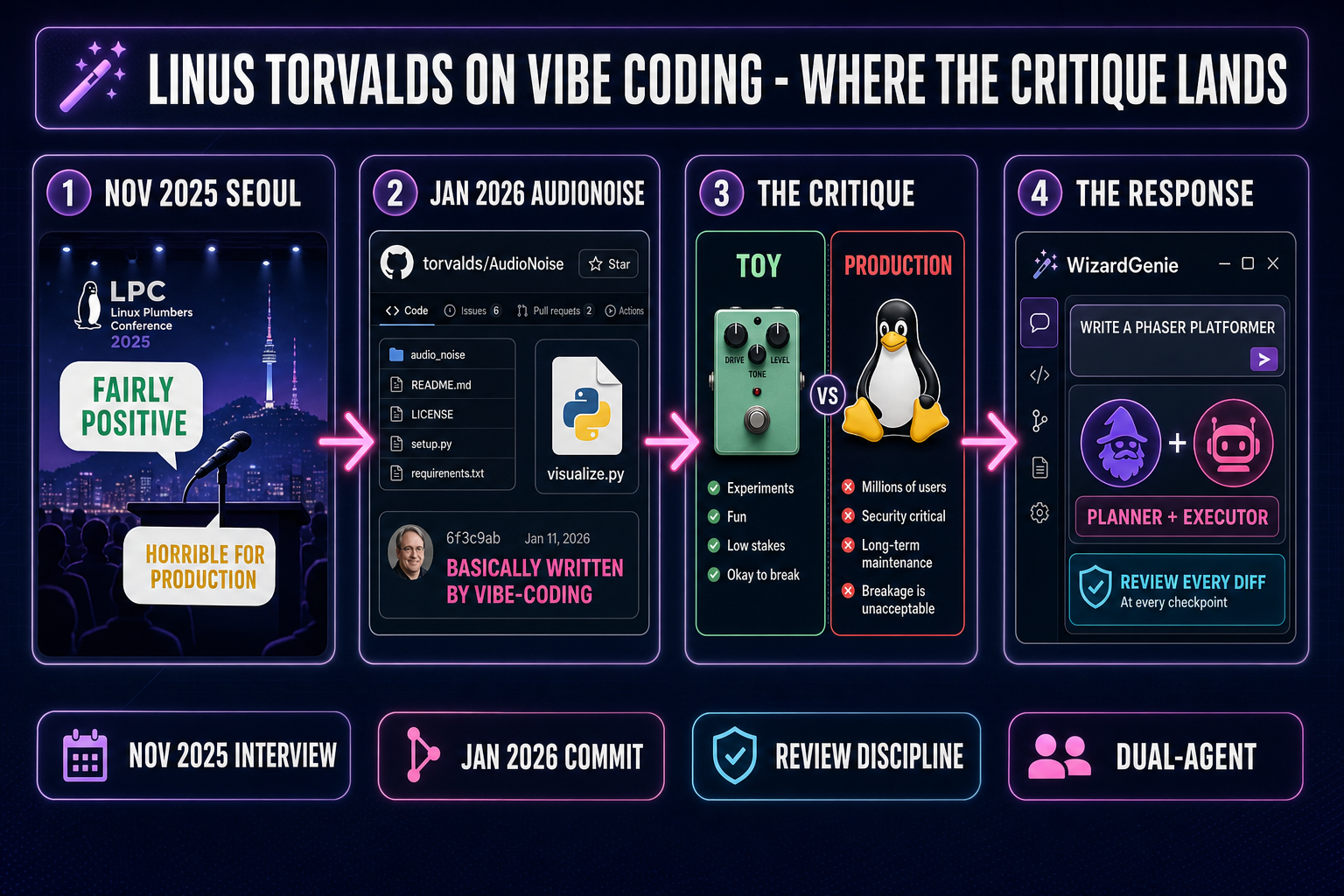

Two months separate the most-cited Linus Torvalds vibe coding moments. In November 2025, on a Seoul stage with Verizon’s Dirk Hohndel, he called vibe coding “a horrible, horrible idea from a maintenance standpoint” for production work while saying he was “fairly positive” about it for newcomers. In January 2026 he created a public GitHub repository, torvalds/AudioNoise, and committed a Python visualizer with a README that says it was “basically written by vibe-coding” using Google Antigravity. Both takes are correct on their own terms. The interesting question is what the gap between them tells engineers who actually ship AI-assisted code in 2026, and how the WizardGenie and Sorceress Code workflows answer the maintenance critique without retreating from the productivity gain. The facts in this post were checked on May 13, 2026 against the live torvalds/AudioNoise repository, The Register’s November 18, 2025 write-up, and Ars Technica’s January 13, 2026 follow-up.

The two interviews that defined the Linus Torvalds vibe coding take

The Linus Torvalds vibe coding conversation has exactly two anchor moments and a lot of secondary commentary. Anchor one: an on-stage interview at the Linux Foundation Open Source Summit Korea in Seoul, November 2025, conducted by Verizon’s open-source chief Dirk Hohndel. The Register’s November 18 2025 write-up of that interview is the canonical source for the “horrible, horrible idea from a maintenance standpoint” quote, the “fairly positive” framing for new entrants, and the AI-as-compiler analogy that anchors his broader read on the field. Anchor two: a public GitHub repository at github.com/torvalds/AudioNoise, created January 9, 2026, in which Torvalds documents that the project’s Python visualizer tool was “basically written by vibe-coding” using Google Antigravity, the new agentic coding IDE that began life as a fork of Windsurf.

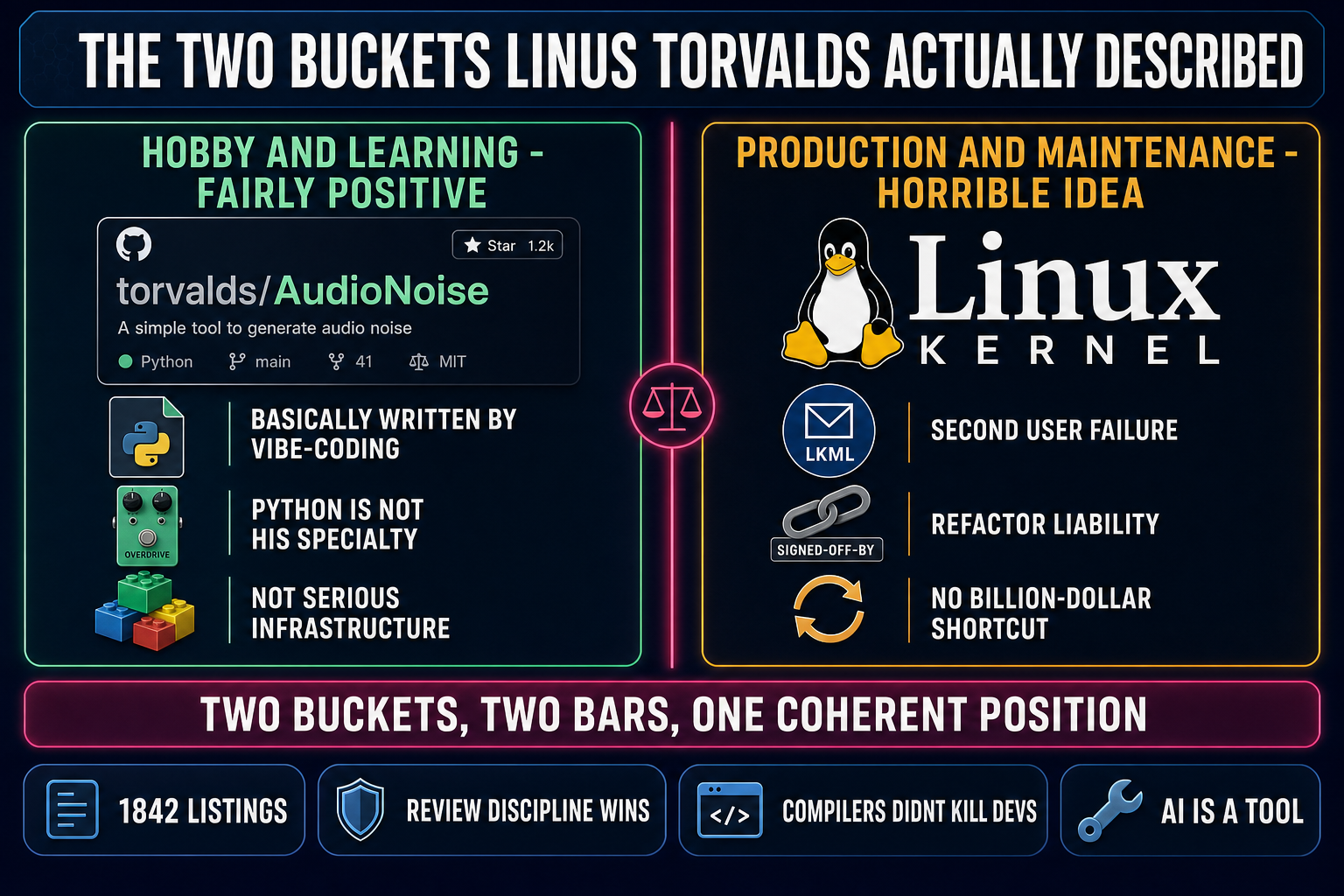

Everything in the broader Linus Torvalds vibe coding discourse hangs off those two moments. Ars Technica’s January 13 2026 follow-up traced the gap between the November critique and the January commit and argued, correctly, that the two are not contradictory. Phoronix covered the AudioNoise repo as the lead story the same week. The thread that runs through every serious read of both moments is the same one this post is going to follow: there are two buckets of code in Torvalds’ head, and the bucket determines whether vibe coding is fine or fatal.

The buckets matter because the skepticism the practice still draws from senior developers, covered honestly in the vibe coding memes piece, collapses both buckets into one. Torvalds himself does not. That is the entire move worth studying.

November 2025 Seoul: “fairly positive” and “horrible idea” in one breath

Strip the headline writing away and the actual position Torvalds laid out in Seoul is precise. He said vibe coding is “a great way for people to get computers to do something that maybe they couldn’t do otherwise” — an entry point for hobbyists, students, and newcomers who would otherwise be locked out of building software at all. The same paragraph in The Register’s reporting carries the qualifier: “may be a horrible, horrible idea from a maintenance standpoint” for production code, and a separate dismissal of people who “hope to make billion-dollar companies by just using vibe coding.”

Two takes, two audiences, two code-quality bars. A learner who vibe-codes a Tetris clone to feel out the shape of game programming is operating in the toy bucket. Nothing about the project will be maintained by a second engineer five years out, so the maintenance critique simply does not apply. A startup that vibe-codes its payment-processing layer and ships it to a thousand paying customers is operating in the production bucket. There the maintenance critique is the entire problem statement: the prototype that worked for the first user is the liability that surfaces when the fifty-first user logs in. Torvalds’ comments are coherent the moment you stop reading them as one position.

The compiler analogy in the same interview is the through-line. “AI is just another tool, the same way compilers freed people from writing assembly code by hand,” he told Hohndel. The framing is deliberately deflating — he is not predicting the end of programming, and he is not predicting that LLM agents will replace senior engineers. Compilers did not eliminate the need for engineers who understood the machine; they raised the abstraction at which engineers worked, and the engineers who did not understand what the compiler was emitting got worse code than the engineers who did. The same shape applies here, and Torvalds is saying it on the record.

January 2026: when Linus Torvalds vibe coded his own project

Two months later he proved the bucket reading himself. The AudioNoise repository is a hobby project for digital guitar-pedal effects — about 58% C, 38% Python, GPL-2.0 licensed, no production deployment, no second engineer downstream. The README is explicit: the project is “entirely a personal hobby project” and the visualizer tool was “basically written by vibe-coding” because Python is not Torvalds’ specialty — he is a C developer by habit, and the alternative to vibe coding the visualizer was, as he put it, “google and monkey-see-monkey-do” copying from forums.

The agent he used was Google Antigravity, the agentic coding IDE Google released in late 2025 as a fork of Windsurf. The choice is interesting on its own — it is a frontier-tier agent, not a toy, and Torvalds is not slumming with a budget tool to make a point. He picked the strongest available agent for a non-critical hobby tool and let it write most of the code. The repository’s commit history shows the “Merge branch ‘antigravity’” commit at 93a72563 as the canonical landing point for the visualizer.

That is the proof of bucket discipline. Torvalds did not vibe code the C audio engine; he hand-wrote that, because that is the half of the project he understands deeply enough to maintain himself. He did vibe code the Python visualization layer, because the visualizer is a throwaway tool he uses to look at signals and the cost of getting it “wrong” is exactly zero. Two buckets, two tools, one engineer who reads his own code-quality bar correctly. The engineering takeaway for vibe coders shipping in 2026 is the same: the bucket determines the workflow, not the headline.

Where the Linus Torvalds vibe coding critique actually lands

The maintenance critique is correct on its terms, and the engineers most allergic to the headline are usually the ones who agree with the substance once they read it carefully. Production code is judged on how well it survives a refactor, not on whether the first prompt produced something that compiled. Code that nobody understands well enough to extend or harden is a liability the moment a second user, a second deployment, or a third API failure shows up. That is the failure mode Torvalds is naming, and it is real.

The shape of the failure is concrete: the prototype works for the first user and breaks for the fifty-first; the auth flow looks clean in a single-tab demo and ships a session-fixation bug the moment a second tab opens; the database query that returns in 40ms on the seed dataset is an N+1 disaster on the production table. None of those are LLM-specific failures — they show up in human-written code too — but they show up faster and more often when nobody on the team has done the reading-and-thinking pass that surfaces them. Code review is the formal name for that pass; vibe-coded production code with no review pass is the worst-case shape of the practice.
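The N+1 pattern named above is easy to reproduce. The following is a minimal sketch, assuming a hypothetical `authors`/`posts` schema in an in-memory SQLite database; the table names, the helper functions, and the row counts are illustrative and not taken from any project discussed here. It counts how many queries each approach issues to produce the same result.

```python
import sqlite3


def build_db(n_authors=50):
    """Create an in-memory database with hypothetical authors and posts tables."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute(
        "CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT)"
    )
    conn.executemany(
        "INSERT INTO authors (id, name) VALUES (?, ?)",
        [(i, f"author-{i}") for i in range(1, n_authors + 1)],
    )
    conn.executemany(
        "INSERT INTO posts (author_id, title) VALUES (?, ?)",
        [(i, f"post-by-{i}") for i in range(1, n_authors + 1)],
    )
    conn.commit()
    return conn


def titles_n_plus_one(conn):
    """N+1 shape: one query for the authors, then one more query per author."""
    queries = 1
    result = {}
    authors = conn.execute("SELECT id, name FROM authors").fetchall()
    for author_id, name in authors:
        rows = conn.execute(
            "SELECT title FROM posts WHERE author_id = ?", (author_id,)
        ).fetchall()
        queries += 1
        result[name] = [title for (title,) in rows]
    return result, queries


def titles_single_join(conn):
    """Same result from a single JOIN, regardless of how many authors exist."""
    queries = 1
    result = {}
    rows = conn.execute(
        "SELECT a.name, p.title FROM authors a "
        "LEFT JOIN posts p ON p.author_id = a.id "
        "ORDER BY a.id, p.id"
    ).fetchall()
    for name, title in rows:
        result.setdefault(name, [])
        if title is not None:  # authors with no posts keep an empty list
            result[name].append(title)
    return result, queries


if __name__ == "__main__":
    conn = build_db(50)
    slow, slow_queries = titles_n_plus_one(conn)
    fast, fast_queries = titles_single_join(conn)
    print(slow == fast, slow_queries, fast_queries)
```

On a small seed dataset both functions feel instant, which is why the first version tends to survive a demo. The query count in the first version grows with the table, and a reviewer who reads the loop sees that before production does.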

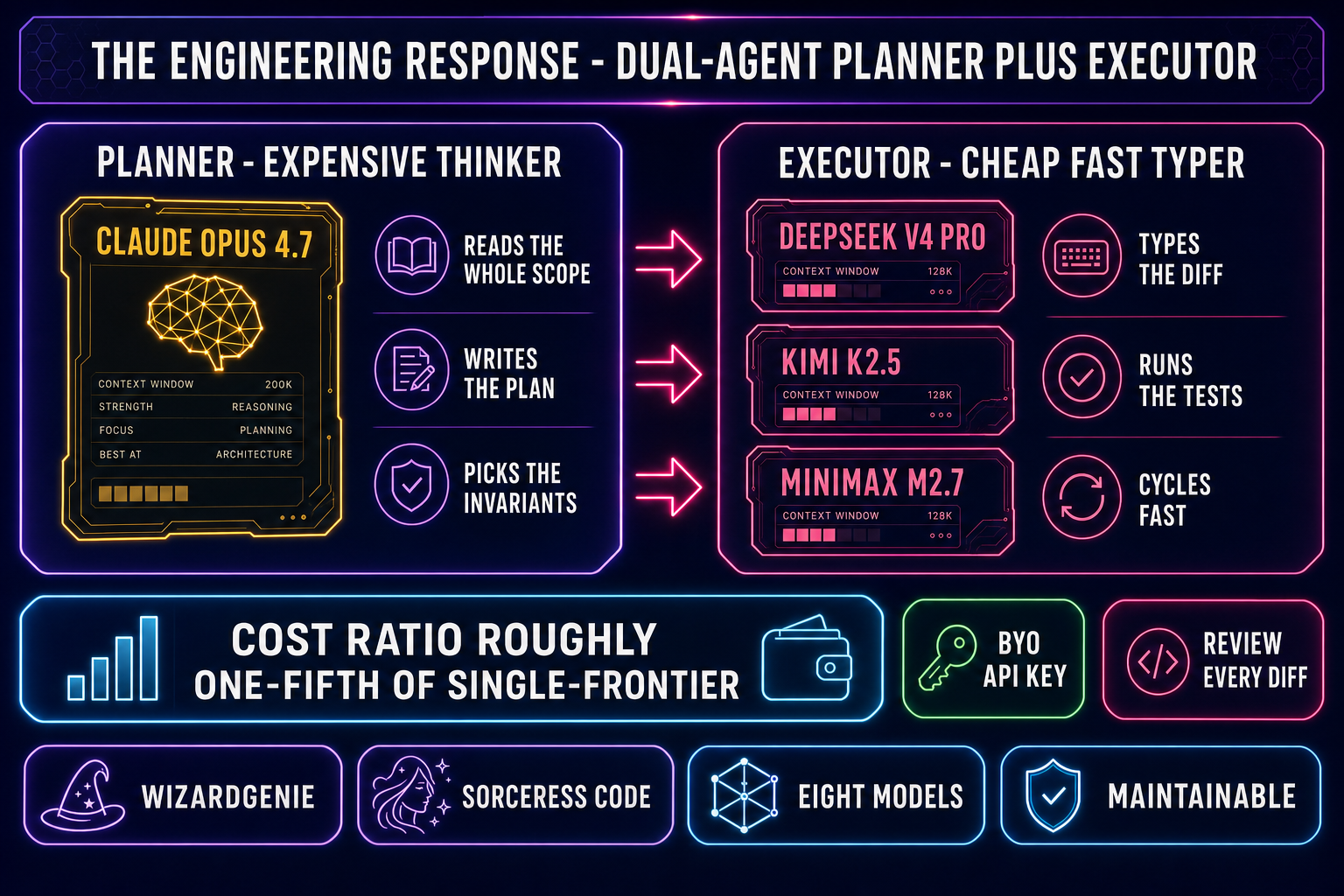

The Linux kernel is the cleanest counter-example to vibe coding without review, and Torvalds is not subtle about that. The kernel’s submission process (patches posted to the LKML, multi-maintainer review, Signed-off-by chains traceable to the contributor) is review discipline made institutional. He has stated publicly that he is not personally using AI-assisted coding for kernel work, and that it is fine for other maintainers to experiment with it privately, but any resulting patch will be judged the same way every other patch is. The kernel will absorb AI-generated code when, and only when, the patch demonstrably passes the same review bar a human-written patch faces. That is not anti-AI. It is anti-handing-the-keyboard-over-without-reading-the-output.
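The traceability half of that process can be approximated outside the kernel with a small gate on commit messages. The sketch below is an assumption-laden illustration, not the kernel's own tooling: `extract_signoffs` and `passes_signoff_gate` are hypothetical helpers, and the only thing borrowed from kernel practice is the `Signed-off-by: Name <email>` trailer format. It checks only that the author appears in the sign-off chain. It says nothing about whether anyone actually read the code.

```python
import re

# Matches a trailer line of the form "Signed-off-by: Full Name <user@host>".
SIGNOFF_RE = re.compile(
    r"^Signed-off-by:\s*(?P<name>[^<>]+?)\s*<(?P<email>[^<>\s]+@[^<>\s]+)>\s*$"
)


def extract_signoffs(message):
    """Return (name, email) pairs for every Signed-off-by trailer, in order."""
    signoffs = []
    for line in message.splitlines():
        match = SIGNOFF_RE.match(line.strip())
        if match:
            signoffs.append((match.group("name"), match.group("email")))
    return signoffs


def passes_signoff_gate(message, author_email):
    """True only if the patch author appears somewhere in the sign-off chain."""
    wanted = author_email.lower()
    return any(email.lower() == wanted for _, email in extract_signoffs(message))


if __name__ == "__main__":
    example = (
        "audio: fix clipping in the phaser\n"
        "\n"
        "Clamp the output before converting to 16-bit samples.\n"
        "\n"
        "Signed-off-by: Jane Dev <jane@example.org>\n"
        "Signed-off-by: Sam Maintainer <sam@example.org>\n"
    )
    print(extract_signoffs(example))
    print(passes_signoff_gate(example, "jane@example.org"))
```

A gate like this is cheap to run in CI for any project, vibe-coded or not. Its value is that every change has a named person who accepted responsibility for it, which is the precondition for the review pass rather than a substitute for it.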