Search “will AI take over game development” in May 2026 and the first page of results splits in two: panic pieces about a profession on the way out, and breathless pieces about a finished game shipped by one person over a weekend. Neither matches the working day of the actual game devs shipping in 2026. The honest read is layered. AI has already taken over the asset and code-scaffolding layer of the pipeline; it has clearly not taken over level design, encounter pacing, narrative voice, or the polish-and-taste week that decides whether a prototype becomes a release. The Activision disclosure on Call of Duty: Black Ops 6 in February 2025 (first reported by The Verge) and Steam’s January 2026 clarification of its AI disclosure form together paint the official picture. The indie corner of vibe coding platforms paints the unofficial one. This post pulls them together. Verified May 16, 2026 against the Verge and Game Developer reports, the GDC 2026 State of the Industry survey, the Steam disclosure form changes, and the Sorceress tool catalog in src/app/_home-v2/_data/tools.ts.

What “will AI take over game development” actually asks

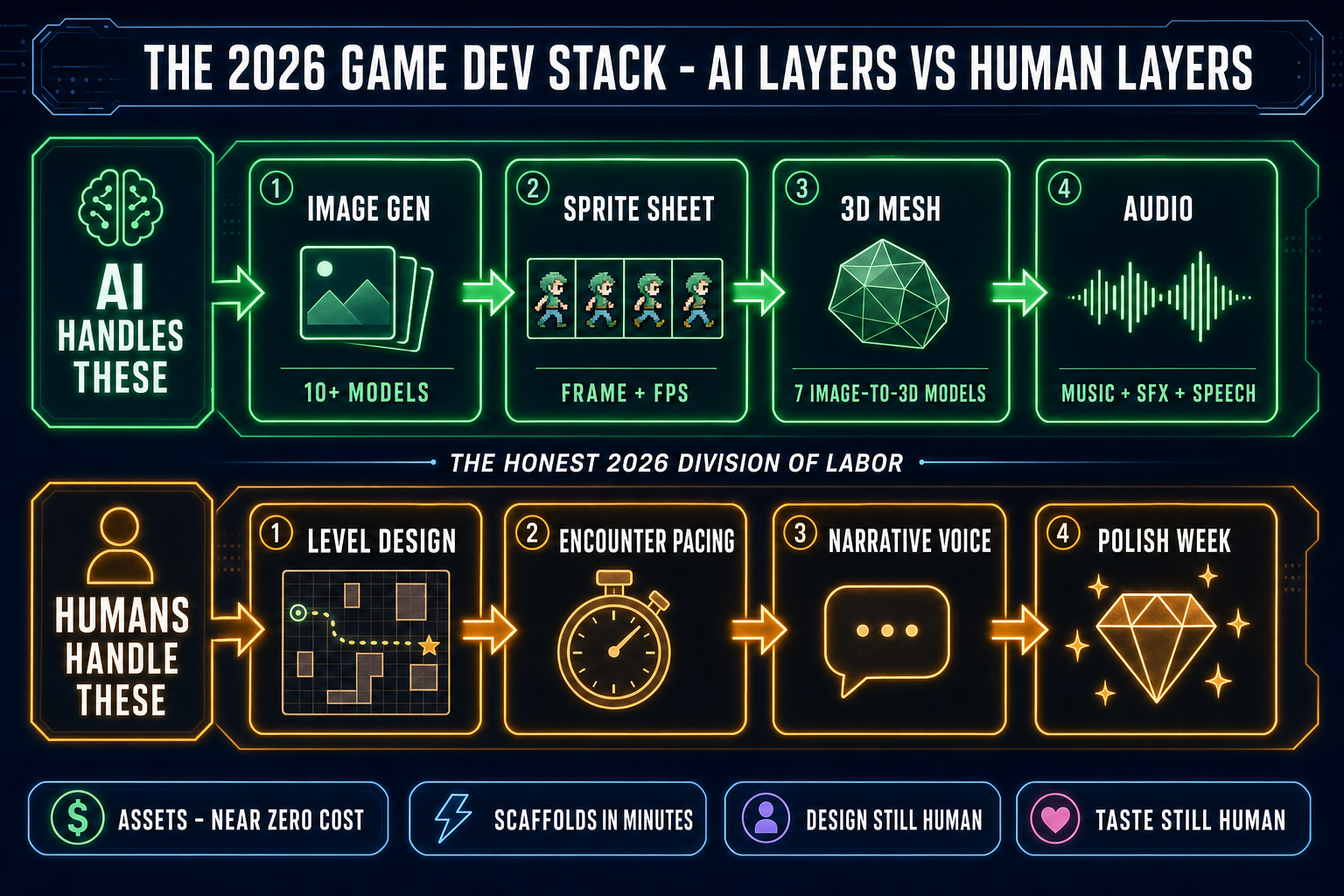

The query is doing two jobs at once. The first is existential: the new dev wondering whether to start a five-year game-dev career path right as the technology that automates parts of it is going vertical. The second is operational: the current dev wondering which parts of their day are about to change shape. Both are legitimate, and one answer serves both: AI has not taken over game development as a whole, but it has taken over specific layers of the pipeline. Knowing which layers tells you which career bets to make in 2026 and which tasks are worth relearning with a new tool instead of doing them the old way. The shape of the takeover is layer-by-layer, not job-by-job.

The empirical proof that AI is not running the show is on the storefronts. Open the Steam new-release sidebar on any week in 2026 and the standout releases are still games whose teams used AI for parts of the production, not games whose teams replaced themselves with AI. Itch.io’s top weekly grossing slots in any 7-day window hold a mix of hand-crafted pixel-art titles and AI-assisted prototypes; none is held by a fully automated game. That pattern matters because storefronts are where the takeover would show up first if it were happening: users buy what they like, and that selection process is brutally honest. The other place it would show up is in studio org charts, and the GDC 2026 State of the Industry survey gives a clear read there too. Layoffs are widespread (one in four developers affected in the last two years, 33% in the US), but respondents themselves attributed them primarily to restructuring (43%), budget cuts (38%), and project cancellations (32%), not AI replacement. AI shows up in the survey as a topic, not as the cause.

Where AI has already taken over — assets first, code second

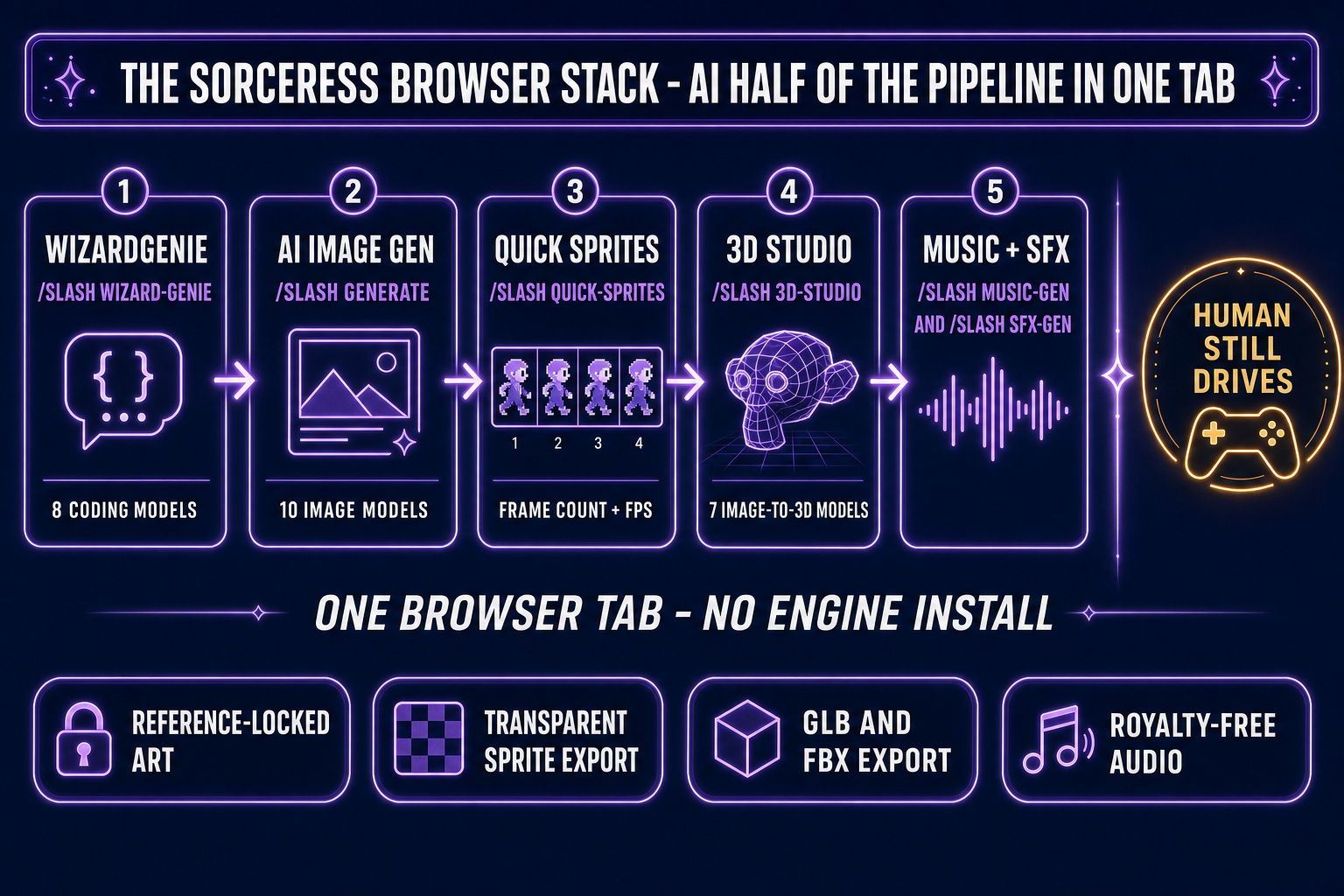

Four layers are now meaningfully AI-run for any team that wants them to be, and all four sit on the asset side of the pipeline, which is the cleanest sweep: images, sprite sheets, 3D meshes, and audio. Images (characters, environments, props, UI elements) come out of AI Image Gen at /generate with reference-image conditioning, so the same wizard stays on-model across eight poses, eight outfits, or eight expressions without each render drifting. Sprite sheets, the packed grids of frames that drive 2D animation, come out of Quick Sprites at /quick-sprites with frame count, FPS, palette quantization, and transparent-background controls, in less time than it takes to find the right pixel-art tutorial. 3D meshes come out of 3D Studio at /3d-studio using one of seven image-to-3D models (Meshy 6, Meshy 5, Rodin 2.0, TRELLIS, TRELLIS 2, Tripo v3.1, Hunyuan 3D 3.1) with PBR materials and an auto-rigging path that exports to glTF 2.0 or FBX. Audio (music loops, sound effects, sometimes voice when the licensing is clean) comes out of Music Gen and SFX Gen with the dev never leaving the browser tab.
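
For readers who have not worked with a packed sprite sheet before, here is a minimal sketch of how a game might read one at runtime. It assumes equal-sized frames laid out left to right, top to bottom. The `SpriteSheet` shape and the function names are hypothetical illustrations, not the export format of Quick Sprites or any other tool.

```typescript
// Hypothetical description of a packed sprite sheet (illustrative field names).
interface SpriteSheet {
  frameWidth: number;  // width of one frame in pixels
  frameHeight: number; // height of one frame in pixels
  columns: number;     // frames per row in the packed grid
  frameCount: number;  // total frames in the animation
  fps: number;         // playback rate in frames per second
}

interface Rect {
  x: number;
  y: number;
  w: number;
  h: number;
}

// Source rectangle of frame `index`, reading the grid row by row.
function frameRect(sheet: SpriteSheet, index: number): Rect {
  const col = index % sheet.columns;
  const row = Math.floor(index / sheet.columns);
  return {
    x: col * sheet.frameWidth,
    y: row * sheet.frameHeight,
    w: sheet.frameWidth,
    h: sheet.frameHeight,
  };
}

// Frame to display after `elapsedMs` of looping playback.
function frameAt(sheet: SpriteSheet, elapsedMs: number): number {
  const ticks = Math.floor((Math.max(0, elapsedMs) / 1000) * sheet.fps);
  return ticks % sheet.frameCount;
}

// Usage: an 8-frame walk cycle packed 4 frames per row, played at 8 FPS.
const walkCycle: SpriteSheet = {
  frameWidth: 32,
  frameHeight: 32,
  columns: 4,
  frameCount: 8,
  fps: 8,
};
const currentFrame = frameAt(walkCycle, 500);           // frame 4
const sourceRect = frameRect(walkCycle, currentFrame);  // { x: 0, y: 32, w: 32, h: 32 }
```

The rectangle is what a renderer would pass as the source region when drawing the frame. Frame count and FPS are the two generation settings that this lookup depends on directly.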

The fifth layer is gameplay code, and the answer is “mostly yes, with caveats.” A vibe-coding session on WizardGenie — the surface where the asset stack and the code editor share a browser tab — turns a one-paragraph brief into a runnable prototype in the time it takes the model to draft the diff. The lift from prompt to playable shape is real, and at the indie scale it is the difference between shipping a jam game and not. The caveats are also real and worth naming honestly: the resulting code needs human review of the architectural decisions, the data model, and the long-tail edge cases. Save state, multiplayer netcode, accessibility, and crash-recovery paths are where AI-scaffolded code reliably underestimates the work that remains. The post on cursor vibe coding and the replit vibe coding breakdown both unpack the same gap from different vendor angles.

Where AI has clearly not taken over game development

Level design is the cleanest example. The AI scaffolds the loop (player controller, camera, collision, basic enemy), but the moment-to-moment shape of a good level (rhythm of empty space and pressure, the timing of an unlock, the placement of a hidden path, the sightline that lets a player feel smart) is still built on hours of human playtest data and a designer who has internalized what fun feels like in that genre. Encounter pacing is the same. An AI can populate an arena with reasonable enemy compositions; it cannot tell you that the fifth encounter in the third dungeon is the one that breaks the difficulty curve, because the answer depends on what the actual human player will do at that point in the run. Narrative voice is the third. AI dialogue at the line level is workable in 2026; AI narrative voice consistent across an entire 30-hour RPG is not, and the gap is large enough that the small teams shipping AI-heavy RPGs in 2026 keep the writers on payroll.

The fourth gap is the polish week. The week (or six) that turns a prototype into a release candidate — cleaning up jank, tuning hit feedback, removing dialog dead-ends, balancing the late-game economy, adding the dozen small affordances that ship-ready games have and prototypes don’t — is where the “built in a weekend” demos consistently stop. Polish is the place where game devs earn their reputation and AI consistently produces a 6/10. The fifth gap is taste, which is harder to define and easier to feel. Players know the difference between a game whose creator cared about every screen and a game that was assembled. The taste-driven calls (cut the entire third act because it does not earn its weight, redesign the boss because the silhouette is unreadable at low resolution, swap the color palette because the wrong color reads as poison in this region) are still human work in 2026 and will be for a while.

Big-studio AI in 2026: Activision and the disclosure norm

The high-profile data point is Activision. In February 2025 Activision added a Steam disclosure to Call of Duty: Black Ops 6 reading “Our team uses generative AI tools to help develop some in game assets.” The disclosure was forced by months of player observation — the now-infamous six-fingered zombie Santa loading screen, weapon decals and prestige emblems with telltale artifacts, calling cards and a Zombies map logo that AI-detector tools flagged. The disclosure was a marker that the workflow had reached AAA, not that the franchise had been replaced by AI. The game is still designed by humans, balanced by humans, voice-acted by humans (the prior controversy over the SAG-AFTRA strike notwithstanding), and shipped by humans. AI assisted the asset pipeline; AI did not write the game.

The platform-level response settled in January 2026. Valve clarified the Steam AI disclosure form to draw a clean line: developers no longer need to disclose use of AI-powered tools for workflow efficiency (code helpers, asset-pipeline assistants, development environments with AI built in), because that category is “not the focus of this section.” The disclosure rules still apply to two player-facing categories: (1) generative AI used to produce content shipped in the game — artwork, sound, narrative, localization — and (2) generative AI that produces content live during gameplay (runtime AI text or images). Marketing assets and Steam store pages also fall under disclosure. Game Developer covered the same disclosure tweak the same week. The net effect: the industry norm in 2026 is “workflow AI is fine, content AI must be disclosed.”
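
The rule of thumb above can be written down as a small decision function. This is a sketch of this post's summary only, not Valve's actual form or any Steam API. The category names and the `needsDisclosure` and `disclosureSummary` helpers are hypothetical.

```typescript
// Hypothetical categories paraphrasing the paragraph above.
type AiUse =
  | "workflow-tool"       // code helpers, asset-pipeline assistants, AI-enabled dev environments
  | "shipped-content"     // artwork, sound, narrative, localization shipped in the game
  | "runtime-generation"  // text or images generated live during gameplay
  | "marketing-or-store"; // marketing assets and store pages

// Under this post's reading, only workflow tooling falls outside disclosure.
function needsDisclosure(use: AiUse): boolean {
  return use !== "workflow-tool";
}

// Collect every use on a project that would trigger disclosure.
function disclosureSummary(uses: AiUse[]): { required: boolean; reasons: AiUse[] } {
  const reasons = uses.filter(needsDisclosure);
  return { required: reasons.length > 0, reasons };
}

// Usage: a project with AI code completion plus AI-generated loading screens.
const exampleProject = disclosureSummary(["workflow-tool", "shipped-content"]);
// exampleProject.required is true; exampleProject.reasons is ["shipped-content"]
```

A team could run a check like this over its own tool inventory before filling in the store form, treating the form itself as the authority on edge cases.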